Cognition, Convexity, and Category Theory

Posted by John Baez

guest post by Tai-Danae Bradley and Brad Theilman

Recently in the Applied Category Theory Seminar our discussions have returned to modeling natural language, this time via Interacting Conceptual Spaces I by Joe Bolt, Bob Coecke, Fabrizio Genovese, Martha Lewis, Dan Marsden, and Robin Piedeleu. In this paper, convex algebras lie at the heart of a compositional model of cognition based on Peter Gärdenfors’ theory of conceptual spaces. We summarize the ideas in today’s post.

Sincere thanks go to Brendan Fong, Nina Otter, Fabrizio Genovese, Joseph Hirsh, and other participants of the seminar for helpful discussions and feedback.

Introduction

A few weeks ago here at the Café, Cory and Jade summarized the main ideas behind the DisCoCat model, i.e. the categorical compositional distributional model of meaning developed in a 2010 paper by Coecke, Sadrzadeh, and Clark. In the comments section of that blog entry, Coecke noted that the DisCoCat model is essentially a grammatical quantum field theory: a functor (morally) from a pregroup to finite-dimensional real vector spaces. In this model, the meaning of a sentence is determined by the meanings of its constituent parts, which are themselves represented as vectors whose meanings are determined statistically. But as he also noted,

Vector spaces are extremely bad at representing meanings in a fundamental way, for example, lexical entailment, like tiger < big cat < mammal < animal can’t be represented in a vector space. At Oxford we are now mainly playing around with alternative models of meaning drawn from cognitive science, psychology and neuroscience. Our Interacting Conceptual Spaces I is an example of this….

This (ICS I) is the paper that we discuss in today’s blog post. It presents a new model in which words are no longer represented as vectors. Instead, they are regions within a conceptual space, a term coined by cognitive scientist Peter Gärdenfors in Conceptual Spaces: The Geometry of Thought. A conceptual space is a combination of geometric domains in which convexity plays a key role. Intuitively, if we have a space representing the concept of fruit, and if two points in this space represent banana, then one expects that every point “in between” should also represent banana. The goal of ICS I is to put Gärdenfors’ idea on a more formal categorical footing while adhering to the main principles of the DisCoCat model. That is, we still consider a functor out of a grammar category, namely the pregroup $\mathsf{Preg}(n,s)$ freely generated by the noun type $n$ and the sentence type $s$. (But in light of Preller’s argument as mentioned previously, we use the word functor with caution.) The semantics category, however, is no longer vector spaces but rather $\mathsf{ConvexRel}$, the category of convex algebras and convex relations. We make these ideas and definitions precise below.

Preliminaries

A convex algebra is, loosely speaking, a set equipped with a way of taking formal finite convex combinations of its elements. More formally, let $A$ be a set and let $D(A)$ denote the set of formal finite sums $\sum_{i=1}^m p_i a_i$ of elements $a_i \in A$, where $p_i \in \mathbb{R}_{\ge 0}$ and $\sum_{i=1}^m p_i = 1$. (We emphasize that this sum is formal. In particular, $A$ need not be equipped with a notion of addition or scaling.) A convex algebra is a set $A$ together with a function $\alpha\colon D(A) \to A$, called a “mixing operation,” that is well-behaved in the following sense:

- the convex combination of a single element is itself, and

- the two ways of evaluating a convex combination of a convex combination are equal.
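To make the two axioms concrete, here is a minimal Python sketch. It assumes formal sums are encoded as lists of (weight, element) pairs with exact `Fraction` weights, and it uses the ordinary weighted average on the real line as the mixing operation, one of the simplest convex algebras. The helper names (`unit`, `flatten`, `mix_reals`, `satisfies_axioms`) are our own. `flatten` turns a convex combination of convex combinations into a single one, so the second axiom says that mixing is compatible with it.

```python
from fractions import Fraction
from typing import Callable, List, Tuple, TypeVar

T = TypeVar("T")

# A formal convex combination sum_i p_i a_i, stored as a list of (p_i, a_i).
# The elements a_i are never added or scaled; the sum stays formal.
Formal = List[Tuple[Fraction, T]]


def is_distribution(combo: Formal) -> bool:
    """True when every p_i >= 0 and the p_i sum to 1."""
    return all(p >= 0 for p, _ in combo) and sum(p for p, _ in combo) == 1


def unit(a: T) -> Formal:
    """The trivial combination 1*a of a single element."""
    return [(Fraction(1), a)]


def flatten(nested: List[Tuple[Fraction, Formal]]) -> Formal:
    """Rewrite sum_i p_i (sum_j q_ij a_ij) as sum_{i,j} (p_i q_ij) a_ij."""
    return [(p * q, a) for p, inner in nested for q, a in inner]


def mix_reals(combo: Formal) -> Fraction:
    """Mixing operation on the real line: the weighted average."""
    assert is_distribution(combo)
    return sum((p * a for p, a in combo), Fraction(0))


def satisfies_axioms(mix: Callable[[Formal], T], nested) -> bool:
    """Spot-check the two convex-algebra axioms on one nested combination."""
    # Axiom 1: mixing the trivial combination 1*a returns a.
    ok_unit = all(mix(unit(a)) == a for _, inner in nested for _, a in inner)
    # Axiom 2: mixing the inner combinations first, then the outer one,
    # agrees with flattening the nested sum and mixing once.
    inner_first = mix([(p, mix(inner)) for p, inner in nested])
    ok_flatten = inner_first == mix(flatten(nested))
    return ok_unit and ok_flatten


half, third = Fraction(1, 2), Fraction(1, 3)
nested = [
    (half, [(third, Fraction(0)), (2 * third, Fraction(3))]),
    (half, [(Fraction(1), Fraction(6))]),
]
print(satisfies_axioms(mix_reals, nested))  # True
print(mix_reals(flatten(nested)))           # 4
```

This is a spot-check on one nested combination rather than a proof; exact fractions keep the equality test free of rounding error.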

For example, every convex subset of $\mathbb{R}^n$ is naturally a convex algebra. (And we can’t resist mentioning that convex subsets of $\mathbb{R}^n$ are also examples of algebras over the operad of topological simplices. But as we learned through a footnote in Tobias Fritz’s Convex Spaces I, it’s best to stick with monads rather than operads. Indeed, a convex algebra is an Eilenberg-Moore algebra of the finite distribution monad.) Join semilattices also provide examples of convex algebras. A finite convex combination of elements in the lattice is defined to be the join of those elements having non-zero coefficients: $\alpha\left(\sum_i p_i a_i\right) = \bigvee_{p_i \neq 0} a_i$. (In particular, the coefficients play no role on the right-hand side.)
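The join-semilattice example can be sketched the same way. Purely for illustration, we assume the semilattice consists of finite sets ordered by inclusion, so the join is union; `mix_join` and `flatten` are hypothetical helper names. The sketch shows that only the support of a combination matters and spot-checks the second axiom.

```python
from fractions import Fraction
from functools import reduce


def mix_join(combo):
    """Mixing operation on finite sets ordered by inclusion (join = union).

    Only elements with a non-zero coefficient contribute, and the
    coefficient values themselves are otherwise ignored.
    """
    support = [a for p, a in combo if p != 0]
    if not support:
        raise ValueError("a convex combination needs a non-zero weight")
    return reduce(lambda s, t: s | t, support)


def flatten(nested):
    """Rewrite sum_i p_i (sum_j q_ij a_ij) as sum_{i,j} (p_i q_ij) a_ij."""
    return [(p * q, a) for p, inner in nested for q, a in inner]


x, y, z = frozenset({"x"}), frozenset({"y"}), frozenset({"z"})
half = Fraction(1, 2)

# Only the support matters: these two combinations mix to the same join.
print(sorted(mix_join([(half, x), (half, y), (Fraction(0), z)])))     # ['x', 'y']
print(sorted(mix_join([(Fraction(9, 10), x), (Fraction(1, 10), y)])))  # ['x', 'y']

# Second axiom: mixing the inner combinations first agrees with flattening.
nested = [(half, [(Fraction(1, 4), x), (Fraction(3, 4), y)]), (half, [(Fraction(1), z)])]
print(mix_join([(p, mix_join(inner)) for p, inner in nested]) == mix_join(flatten(nested)))  # True
```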

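Given two convex algebras $(A,\alpha)$ and $(B,\beta)$, a convex relation is a binary relation $R \subseteq A \times B$ that respects convexity. That is, if $(a_i, b_i) \in R$ for all $i = 1, \dots, m$, then $\left(\alpha\left(\sum_i p_i a_i\right), \beta\left(\sum_i p_i b_i\right)\right) \in R$ for any convex coefficients $p_i$. We then define $\mathsf{ConvexRel}$ to be the category with convex algebras as objects and convex relations as morphisms. Composition and identities are as for usual binary relations.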
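For finite join semilattices, convexity of a relation can be tested directly. The sketch below covers only this special case and is not a general algorithm: because mixing in a semilattice just takes the join of the elements with non-zero weight, a relation between two such algebras is convex exactly when it is closed under componentwise binary joins. The function name `is_convex_relation` is our own.

```python
from itertools import product


def is_convex_relation(R, join_A, join_B):
    """Check whether a finite relation R ⊆ A × B between two finite join
    semilattices (with their join-based mixing operations) is convex.

    Mixing pairs (a_i, b_i) with non-zero weights yields (⋁ a_i, ⋁ b_i), so R
    is convex exactly when it is closed under componentwise binary joins.
    """
    R = set(R)
    return all(
        (join_A(a1, a2), join_B(b1, b2)) in R
        for (a1, b1), (a2, b2) in product(R, repeat=2)
    )


# The two-element join semilattice {0, 1}, where the join is max.
identity = {(0, 0), (1, 1)}
negation = {(0, 1), (1, 0)}
print(is_convex_relation(identity, max, max))  # True
print(is_convex_relation(negation, max, max))  # False: the join (1, 1) is not in R
```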

Now, since this model replaces the category of vector spaces with $\mathsf{ConvexRel}$, one hopes that, in keeping with the spirit of the DisCoCat model, $\mathsf{ConvexRel}$ admits a symmetric monoidal compact closed structure. Indeed it does.

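- $\mathsf{ConvexRel}$ has a symmetric monoidal structure given by the Cartesian product: we use $(A,\alpha) \otimes (B,\beta)$ to denote the set $A \times B$ equipped with the mixing operation $\alpha \times \beta$ given by $\sum_i p_i (a_i, b_i) \mapsto \left(\alpha\left(\sum_i p_i a_i\right), \beta\left(\sum_i p_i b_i\right)\right)$. The monoidal unit is the one-point set $\{*\}$, which has a unique convex algebra structure. We’ll denote this convex algebra by $I$.

- Each object in $\mathsf{ConvexRel}$ is self-dual, and cups $I \to A \otimes A$ and caps $A \otimes A \to I$ are given as follows: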

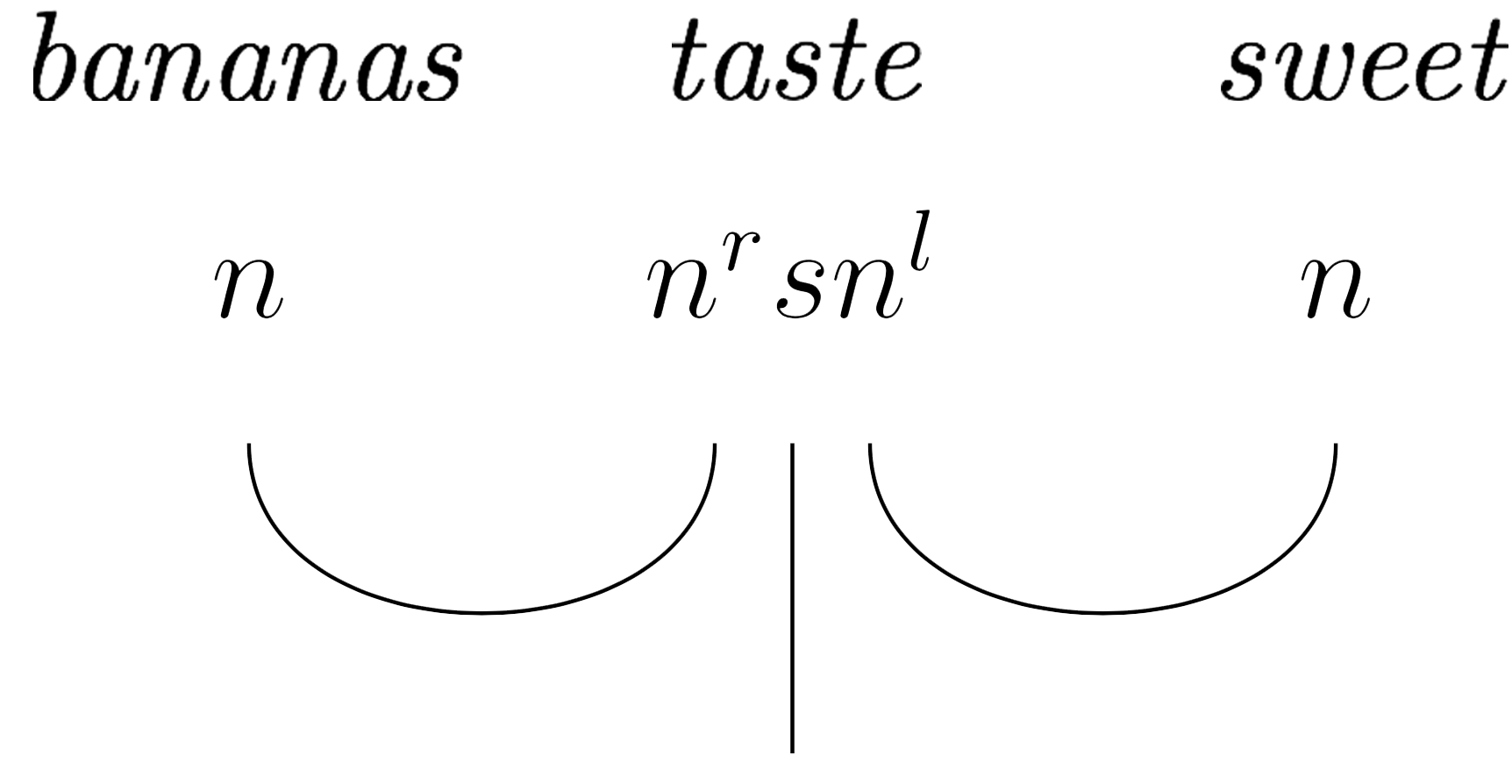
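The yanking (snake) equation behind the compact closed structure can be checked mechanically on a small finite set. This sketch assumes that the cup and cap are the diagonal relations $\{(*, (a,a))\}$ and $\{((a,a), *)\}$, as in the category of sets and relations, and it spells out the unitors and the associator as explicit relations. The carrier `A` and all helper names are hypothetical.

```python
from itertools import product

A = {"a", "b", "c"}   # any finite carrier set will do
STAR = ()             # the single element of the unit I = {*}


def compose(R, S):
    """Relational composite 'S after R': x ~ z iff x R y and y S z for some y."""
    return {(x, z) for (x, y) in R for (y2, z) in S if y == y2}


def tensor(R, S):
    """Monoidal product of relations, acting componentwise on pairs."""
    return {((x1, x2), (y1, y2)) for (x1, y1) in R for (x2, y2) in S}


identity = {(a, a) for a in A}
cup = {(STAR, (a, a)) for a in A}                  # I -> A ⊗ A
cap = {((a, a), STAR) for a in A}                  # A ⊗ A -> I
unit_right_inv = {(a, (a, STAR)) for a in A}       # A -> A ⊗ I
assoc = {((a, (b, c)), ((a, b), c)) for a, b, c in product(A, repeat=3)}
unit_left = {((STAR, a), a) for a in A}            # I ⊗ A -> A

# A -> A⊗I -> A⊗(A⊗A) -> (A⊗A)⊗A -> I⊗A -> A
snake = unit_right_inv
for step in (tensor(identity, cup), assoc, tensor(cap, identity), unit_left):
    snake = compose(snake, step)

print(snake == identity)  # True
```

Only the pairs with matching middle elements survive the cap, which is why the composite collapses to the identity relation.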

The compact closed structure guarantees that $\mathsf{ConvexRel}$ fits nicely into the DisCoCat framework: words in a sentence are assigned types according to a chosen pregroup grammar, and a sentence is deemed grammatical if it reduces to type $s$. Moreover, these type reductions in $\mathsf{Preg}(n,s)$ give rise to corresponding morphisms in $\mathsf{ConvexRel}$, where the meaning of the sentence can be determined. We’ll illustrate this below by computing the meaning of the sentence “bananas taste sweet.”

To start, note that this sentence involves three grammar types: $n$ (nouns), $s$ (sentences), and $n^r s n^l$ (transitive verbs). Each corresponds to a different conceptual space, which we describe next.
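The grammatical reduction can be sketched as a tiny type checker. We assume the encoding $x^{(k)}$ with $k = 1$ for $x^r$ and $k = -1$ for $x^l$, so both contractions $x\,x^r \to 1$ and $x^l x \to 1$ become the single rule $x^{(k)} x^{(k+1)} \to 1$. The greedy stack-based pass below is a simplification that suffices for this sentence; it is not a complete parser for arbitrary pregroup grammars, and `reduce_types` is a hypothetical name.

```python
from typing import List, Tuple

# A simple type (base, k): k = 0 for the base type, k = 1 for the right
# adjoint x^r, k = -1 for the left adjoint x^l (larger |k| = iterated adjoints).
SimpleType = Tuple[str, int]


def reduce_types(types: List[SimpleType]) -> List[SimpleType]:
    """Greedily apply the contraction x^(k) x^(k+1) -> 1, left to right."""
    stack: List[SimpleType] = []
    for base, k in types:
        if stack and stack[-1] == (base, k - 1):
            stack.pop()                  # contract with the type on its left
        else:
            stack.append((base, k))
    return stack


n, s = "n", "s"
bananas = [(n, 0)]
taste = [(n, 1), (s, 0), (n, -1)]        # n^r s n^l
sweet = [(n, 0)]
print(reduce_types(bananas + taste + sweet))  # [('s', 0)]
```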

Computing Meaning

The Noun Space

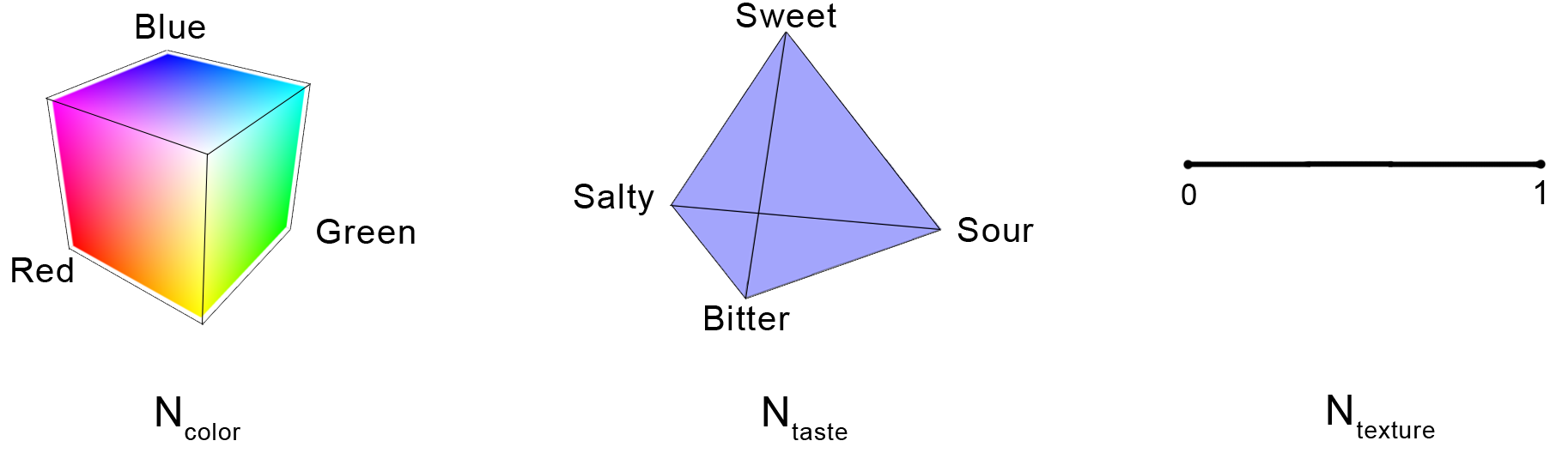

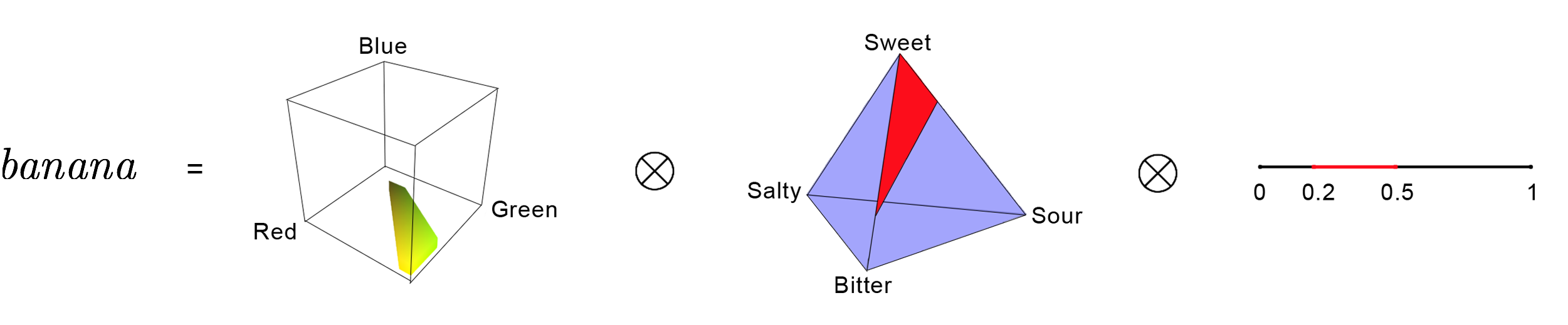

A noun is a state $I \to N$, i.e. a convex subset of the noun space $N$. Restricting our attention to food nouns, the space $N$ is a product of color, taste, and texture domains, $N = N_{\text{color}} \otimes N_{\text{taste}} \otimes N_{\text{texture}}$, where

- $N_{\text{color}}$ is the RGB color cube, i.e. the set of all triples $(R, G, B) \in [0,1]^3$.

- $N_{\text{taste}}$ is the taste tetrahedron, i.e. the convex hull of four basic tastes: sweet, sour, bitter, and salty.

- $N_{\text{texture}}$ is the unit interval $[0,1]$, where 0 represents liquid and 1 represents solid.
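A point of $N$ can be represented by one coordinate block per domain, with the taste written in barycentric coordinates over the four basic tastes. The sketch below, whose class and function names are hypothetical, shows that the mixing operation on the product acts domain by domain and that a mixture of two points of $N$ stays in $N$. The sample coordinates are invented for illustration.

```python
from dataclasses import dataclass
from fractions import Fraction as F
from typing import Tuple

TASTES = ("sweet", "sour", "bitter", "salty")


@dataclass(frozen=True)
class FoodPoint:
    color: Tuple[F, F, F]      # (R, G, B) in the unit cube [0, 1]^3
    taste: Tuple[F, F, F, F]   # barycentric weights over TASTES
    texture: F                 # 0 = liquid, 1 = solid

    def in_noun_space(self) -> bool:
        def in_unit(v):
            return 0 <= v <= 1
        return (all(in_unit(v) for v in self.color)
                and all(w >= 0 for w in self.taste) and sum(self.taste) == 1
                and in_unit(self.texture))


def mix(p: F, x: FoodPoint, y: FoodPoint) -> FoodPoint:
    """The binary convex combination p*x + (1-p)*y, computed domain by domain,
    as the product mixing operation on N prescribes."""
    q = 1 - p
    return FoodPoint(
        color=tuple(p * a + q * b for a, b in zip(x.color, y.color)),
        taste=tuple(p * a + q * b for a, b in zip(x.taste, y.taste)),
        texture=p * x.texture + q * y.texture,
    )


# Hypothetical sample points; the coordinates are made up for illustration.
yellowish = FoodPoint((F(1), F(1), F(0)), (F(1), F(0), F(0), F(0)), F(3, 5))
greenish = FoodPoint((F(0), F(1), F(0)), (F(1, 2), F(0), F(1, 2), F(0)), F(4, 5))
m = mix(F(1, 2), yellowish, greenish)
print(m.in_noun_space())  # True
print(m.texture)          # 7/10
```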

The noun banana is then a product of three convex subregions, one in each domain of $N$: a yellow/green region of the color cube, the convex hull of three points in the taste tetrahedron, and a subinterval of the texture interval. Other foods and beverages, such as avocados, chocolate, and beer, can be expressed similarly.
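Since the post describes the banana regions only qualitatively, the following sketch uses made-up convex regions purely as placeholders: a box in the color cube, the face of the taste tetrahedron spanned by sweet, sour, and bitter, and a subinterval for texture. None of these bounds come from ICS I. The sketch illustrates that membership in a product region is checked factor by factor; the midpoint test is a sanity check, not a proof of convexity.

```python
from fractions import Fraction as F

# Placeholder regions: every bound below is hypothetical. Each factor is
# convex, hence so is their product.


def in_color(rgb):
    """Hypothetical yellow/green box in the RGB cube."""
    r, g, b = rgb
    return 0 <= r <= 1 and F(1, 2) <= g <= 1 and 0 <= b <= F(1, 5)


def in_taste(w):
    """Hypothetical convex hull of three tastes: weights over
    (sweet, sour, bitter, salty) with no salty component."""
    return all(x >= 0 for x in w) and sum(w) == 1 and w[3] == 0


def in_texture(t):
    """Hypothetical subinterval of the texture interval [0, 1]."""
    return F(1, 2) <= t <= F(4, 5)


def in_banana(point):
    """A point lies in a product region iff each coordinate lies in its factor."""
    rgb, taste, texture = point
    return in_color(rgb) and in_taste(taste) and in_texture(texture)


def midpoint(x, y):
    def avg(a, b):
        return (a + b) / 2
    return (tuple(map(avg, x[0], y[0])), tuple(map(avg, x[1], y[1])), avg(x[2], y[2]))


p = ((F(1), F(1), F(0)), (F(1), F(0), F(0), F(0)), F(3, 5))
q = ((F(0), F(1, 2), F(1, 5)), (F(0), F(1, 2), F(1, 2), F(0)), F(4, 5))
print(in_banana(p), in_banana(q), in_banana(midpoint(p, q)))  # True True True
salty = ((F(1), F(1), F(0)), (F(0), F(0), F(0), F(1)), F(3, 5))
print(in_banana(salty))  # False
```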

The Sentence Space

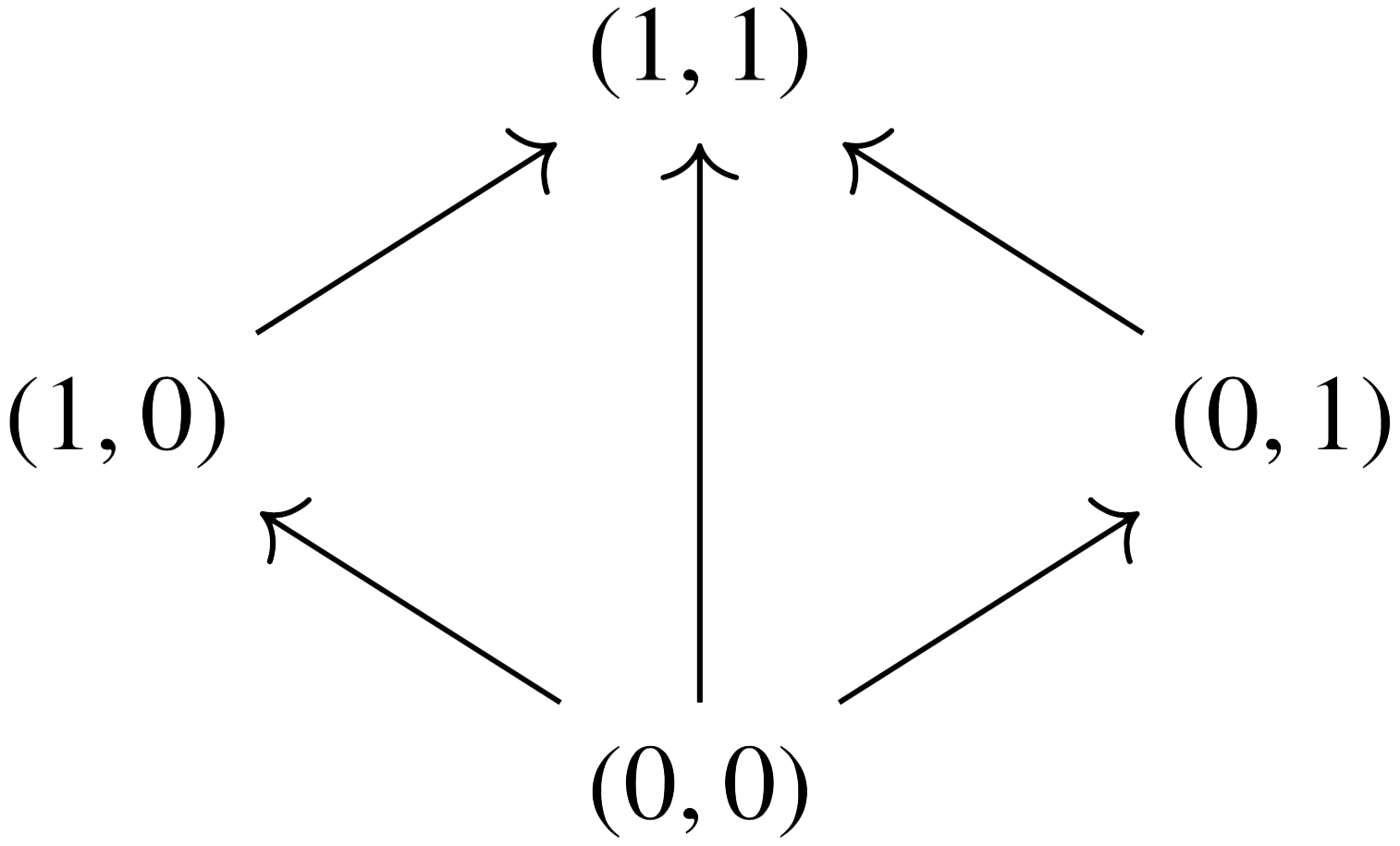

The meaning of a sentence is a convex subset of a sentence space $S$. Here, $S$ is chosen as a simple-yet-sensible space to capture one’s experience when eating and drinking. It is the join semilattice on four points,

$S = \{(0,0),\ (0,1),\ (1,0),\ (1,1)\}$,

where in the first component, 0 = negative and 1 = positive, while in the second component, 0 = not surprising and 1 = surprising. For instance, $(0,1)$ represents negative and surprising, while the convex subset $\{(1,0), (1,1)\}$ represents positive.
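Because $S$ is a join semilattice, convex combinations in it are joins, so a subset of $S$ is convex exactly when it is closed under joins. The sketch below assumes the componentwise order on pairs, so that the join is the componentwise maximum; the helper names `join`, `is_convex`, and `convex_hull` are our own.

```python
from itertools import combinations

S = [(0, 0), (0, 1), (1, 0), (1, 1)]


def join(s, t):
    # Assumed componentwise order: (a, b) <= (c, d) iff a <= c and b <= d.
    return (max(s[0], t[0]), max(s[1], t[1]))


def is_convex(subset):
    """A subset of a join semilattice is convex iff it is closed under joins."""
    return all(join(s, t) in subset for s, t in combinations(subset, 2))


def convex_hull(subset):
    """Smallest join-closed subset containing the given points."""
    hull = set(subset)
    while True:
        new = {join(s, t) for s in hull for t in hull} - hull
        if not new:
            return hull
        hull |= new


positive = {(1, 0), (1, 1)}
print(is_convex(positive))                    # True
print(is_convex({(0, 1), (1, 0)}))            # False: their join (1, 1) is missing
print(sorted(convex_hull({(0, 1), (1, 0)})))  # [(0, 1), (1, 0), (1, 1)]
```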

The Verb Space

A transitive verb is a convex subset of $N \otimes S \otimes N$. For instance, if we suppose momentarily that we live in a world in which one can survive on bananas and beer alone, then the verb taste can be represented as the convex hull, denoted Conv, of a union of product regions that pair foods such as green banana and yellow banana with their tastes and the corresponding points of $S$. Here, green is an intersective adjective, so green banana is computed by taking the intersection of the banana region with the green region of the color cube. Likewise for yellow banana.
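The intersective-adjective step can be sketched under a simplifying assumption: color regions are modeled as axis-aligned boxes, which are convex and closed under intersection (the regions in ICS I need not be boxes). Since green constrains only the color domain, intersecting banana with green amounts to intersecting the color factors while leaving taste and texture unchanged. The `Box` class and all bounds are hypothetical.

```python
from dataclasses import dataclass
from fractions import Fraction as F
from typing import Optional, Tuple

Interval = Tuple[F, F]


@dataclass(frozen=True)
class Box:
    """An axis-aligned box in the RGB cube, one closed interval per channel.
    Boxes are convex, and two boxes intersect in a box (or not at all)."""
    r: Interval
    g: Interval
    b: Interval

    def intersect(self, other: "Box") -> Optional["Box"]:
        def meet(i: Interval, j: Interval) -> Optional[Interval]:
            lo, hi = max(i[0], j[0]), min(i[1], j[1])
            return (lo, hi) if lo <= hi else None

        parts = (meet(self.r, other.r), meet(self.g, other.g), meet(self.b, other.b))
        return None if None in parts else Box(*parts)


# Hypothetical bounds, for illustration only.
banana_color = Box(r=(F(1, 2), F(1)), g=(F(1, 2), F(1)), b=(F(0), F(1, 5)))
green = Box(r=(F(0), F(3, 5)), g=(F(1, 2), F(1)), b=(F(0), F(2, 5)))
green_banana_color = banana_color.intersect(green)
print(green_banana_color)
```

If the two regions do not overlap, `intersect` returns `None`, mirroring an empty (and trivially convex) intersection.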

Tying it all together

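Finally, we compute the meaning of bananas taste sweet, which has grammar type reduction $n\,(n^r s\, n^l)\,n \to s$. In $\mathsf{ConvexRel}$, this corresponds to the morphism $(\epsilon_N \otimes 1_S \otimes \epsilon_N) \circ (\text{bananas} \otimes \text{taste} \otimes \text{sweet})\colon I \to S$, where $\epsilon_N\colon N \otimes N \to I$ is the cap. Note that the rightmost cap selects the elements of taste whose object component lies in sweet and then deletes “sweet.” The leftmost cap selects the elements of taste whose subject component lies in banana and then deletes “banana.” We are left with a convex subset of $S$, i.e. the meaning of the sentence.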
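The “select, then delete” behavior of the two caps can be imitated on a finite toy model. The sketch replaces convex regions by small sets of labeled sample points, and the `taste` relation below is invented for illustration; it is not the relation from ICS I. The hypothetical function `sentence_meaning` keeps the triples $(x, s, y)$ of the verb with $x$ in the subject and $y$ in the object, then discards $x$ and $y$.

```python
# Hypothetical toy data: labels stand in for convex regions of N, and pairs
# (positive?, surprising?) are points of S. Not the relation from ICS I.
taste = {
    ("yellow banana", (1, 0), "sweet"),
    ("green banana", (0, 0), "bitter"),
    ("beer", (0, 1), "bitter"),
}


def sentence_meaning(subject, verb, obj):
    """Compose subject ⊗ verb ⊗ obj with a cap on each side: keep the triples
    (x, s, y) of the verb with x in the subject and y in the object, then
    discard x and y, leaving a subset of the sentence space."""
    return {s for (x, s, y) in verb if x in subject and y in obj}


bananas = {"yellow banana", "green banana"}
sweet = {"sweet"}
print(sentence_meaning(bananas, taste, sweet))  # {(1, 0)}
```

In the genuine model, both the inputs and the result are convex subsets, and the composite of convex relations is again convex; the toy only shows the mechanics of the caps.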

Closing Remarks

Although not shown in the example above, one can also account for relative pronouns using certain morphisms called multi-wires or spiders (these arise from commutative special dagger Frobenius structures). The authors also give a toy example from the non-food world by modeling movement of a person from one location to another, using time and space to define new noun, sentence, and verb spaces.

In short, the conceptual spaces framework seeks to capture meaning in a way that resembles human thought more closely than the vector space model. This leaves us to puzzle over a couple of questions: 1) Do all concepts exhibit a convex structure? and 2) How might the conceptual spaces framework be implemented experimentally?

Re: Cognition, Convexity, and Category Theory

Nice!

So why is it best to stick with the monad rather than work with Tom Leinster’s operad in this situation? I’m too lazy to find the footnote in Tobias Fritz’s paper.

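I like Tom’s operad, whose $n$-ary operations are convex linear combinations: $(x_1, \dots, x_n) \mapsto p_1 x_1 + \cdots + p_n x_n$, where $p_i \ge 0$ and $p_1 + \cdots + p_n = 1$.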

I think that the surprising theorem relating Tom’s operad and Shannon information (also called Shannon entropy), together with material you’re discussing on convex sets and ‘meaning’, might reveal an interesting new link between information and meaning.