Categories in Control

Posted by John Baez

To understand ecosystems, ultimately will be to understand networks. - B. C. Patten and M. Witkamp

A while back I decided one way to apply my math skills to help save the planet was to start pushing toward green mathematics: a kind of mathematics that can interact with biology and ecology just as fruitfully as traditional mathematics interacts with physics. As usual with math, the payoffs will come slowly, but they may be large. It’s not a substitute for doing other, more urgent things—but if mathematicians don’t do this, who will?

As a first step in this direction, I decided to study networks.

This May, a small group of mathematicians is meeting in Turin for a workshop on the categorical foundations of network theory, organized by Jacob Biamonte. I’m trying to get us mentally prepared for this. We all have different ideas, yet they should fit together somehow.

Tobias Fritz, Eugene Lerman and David Spivak have all written articles here about their work, though I suspect Eugene will have a lot of completely new things to say, too. Now I want to say a bit about what I’ve been doing with Jason Erbele.

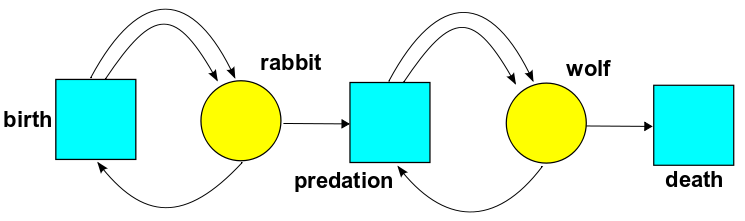

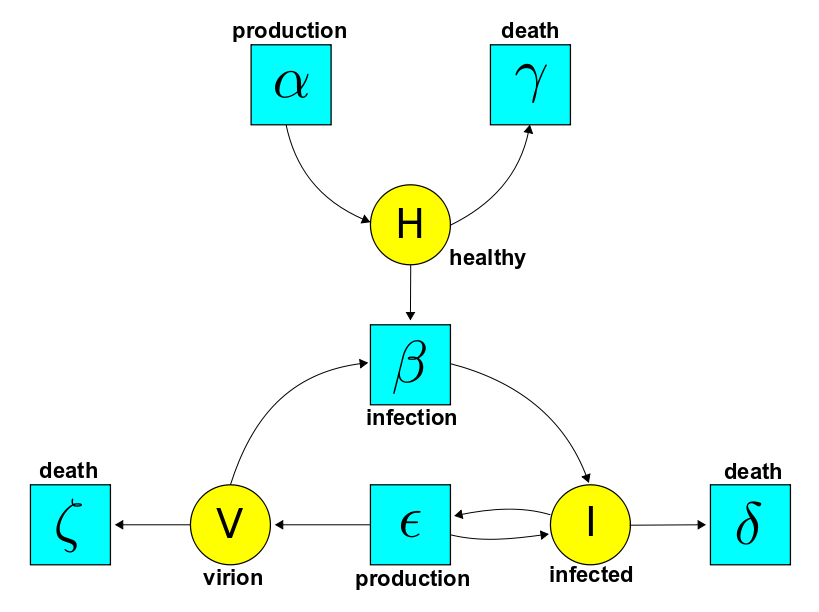

Despite my ultimate aim of studying biological and ecological networks, I decided to start by clarifying the math of networks that appear in chemistry and engineering, since these are simpler, better understood, useful in their own right, and probably a good warmup for the grander goal. I’ve been working with Brendan Fong on electrical circuits, and with Jason Erbele on control theory. Let me talk about this paper:

• John Baez and Jason Erbele, Categories in control.

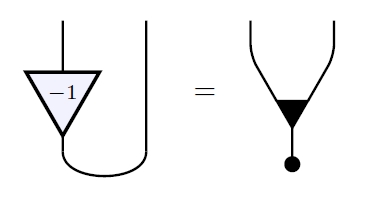

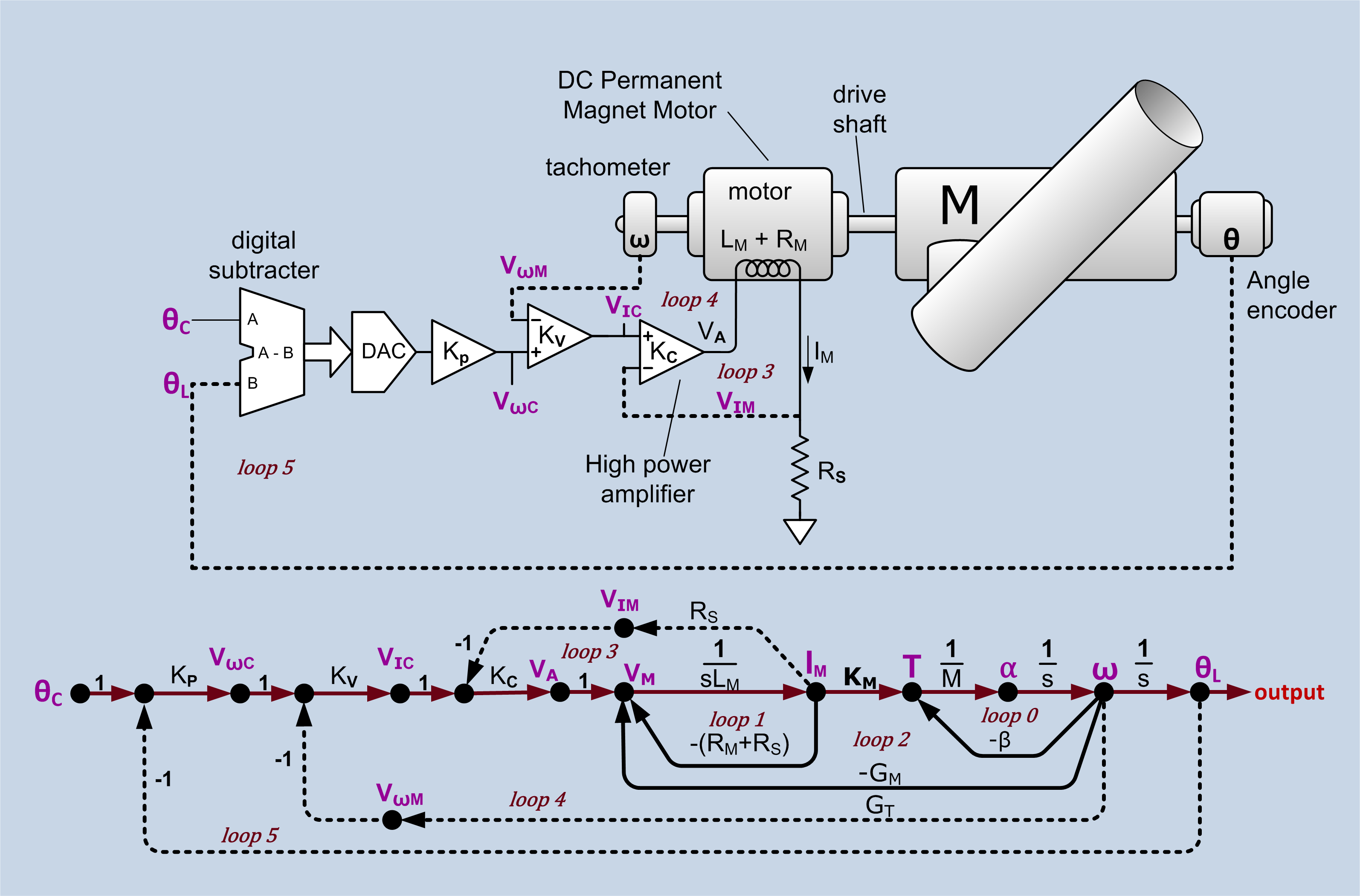

Control theory is the branch of engineering that focuses on manipulating open systems (systems with inputs and outputs) to achieve desired goals. In control theory, signal-flow diagrams are used to describe linear ways of manipulating signals, for example smooth real-valued functions of time. Here’s a real-world example:

For a category theorist, at least, it is natural to treat signal-flow diagrams as string diagrams in a symmetric monoidal category. This forces some small changes of perspective, which I’ll explain, but more important is the question: which symmetric monoidal category?

We argue that the answer is: the category $\mathrm{FinRel}_k$ of finite-dimensional vector spaces over a certain field $k$, but with linear relations rather than linear maps as morphisms, and direct sum rather than tensor product providing the symmetric monoidal structure. We use the field $k = \mathbb{R}(s)$ consisting of rational functions in one real variable $s$. This variable has the meaning of differentiation. A linear relation from $k^m$ to $k^n$ is thus a system of linear constant-coefficient ordinary differential equations relating $m$ ‘input’ signals and $n$ ‘output’ signals.

Our main goal in this paper is to provide a complete ‘generators and relations’ picture of this symmetric monoidal category, with the generators being familiar components of signal-flow diagrams. It turns out that the answer has an intriguing but mysterious connection to ideas that are familiar in the diagrammatic approach to quantum theory! Quantum theory also involves linear algebra, but it uses linear maps between Hilbert spaces as morphisms, and the tensor product of Hilbert spaces provides the symmetric monoidal structure.

We hope that the category-theoretic viewpoint on signal-flow diagrams will shed new light on control theory. However, in this paper we only lay the groundwork.

Signal flow diagrams

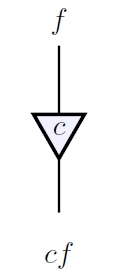

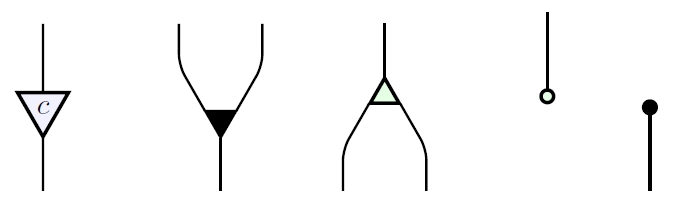

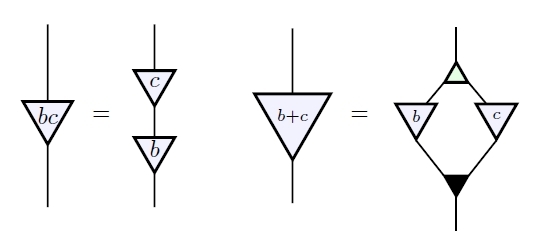

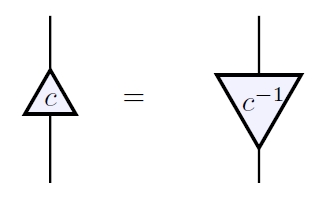

There are several basic operations that one wants to perform when manipulating signals. The simplest is multiplying a signal by a scalar. A signal can be amplified by a constant factor:

$$f \mapsto c f$$

where $c \in \mathbb{R}$. We can write this as a string diagram:

Here the labels $f$ and $c f$ on top and bottom are just for explanatory purposes and not really part of the diagram. Control theorists often draw arrows on the wires, but this is unnecessary from the string diagram perspective. Arrows on wires are useful to distinguish objects from their duals, but ultimately we will obtain a compact closed category where each object is its own dual, so the arrows can be dropped. What we really need is for the box denoting scalar multiplication to have a clearly defined input and output. This is why we draw it as a triangle. Control theorists often use a rectangle or circle, using arrows on wires to indicate which carries the input and which the output.

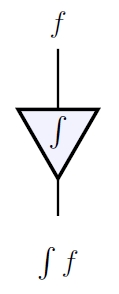

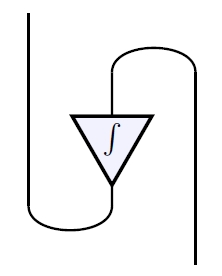

A signal can also be integrated with respect to the time variable:

$$f \mapsto \int f$$

Mathematicians typically take differentiation as fundamental, but engineers sometimes prefer integration, because it is more robust against small perturbations. In the end it will not matter much here. We can again draw integration as a string diagram:

Since this looks like the diagram for scalar multiplication, it is natural to extend $\mathbb{R}$ to $\mathbb{R}(s)$, the field of rational functions of a variable $s$ which stands for differentiation. Then differentiation becomes a special case of scalar multiplication, namely multiplication by $s$, and integration becomes multiplication by $1/s$. Engineers accomplish the same effect with Laplace transforms, since differentiating a signal $f$ is equivalent to multiplying its Laplace transform

$$(\mathcal{L}f)(s) = \int_0^\infty f(t)\, e^{-st}\, dt$$

by the variable $s$. Another option is to use the Fourier transform: differentiating $f$ is equivalent to multiplying its Fourier transform

$$(\mathcal{F}f)(\omega) = \int_{-\infty}^\infty f(t)\, e^{-i\omega t}\, dt$$

by $i\omega$. Of course, the function $f$ needs to be sufficiently well-behaved to justify calculations involving its Laplace or Fourier transform. At a more basic level, it also requires some work to treat integration as the two-sided inverse of differentiation. Engineers do this by considering signals that vanish for $t < 0$ and choosing the antiderivative that vanishes under the same condition. Luckily all these issues can be side-stepped in a formal treatment of signal-flow diagrams: we can simply treat signals as living in an unspecified vector space over the field $\mathbb{R}(s)$. The field $\mathbb{C}(s)$ would work just as well, and control theory relies heavily on complex analysis. In our paper we work over an arbitrary field $k$.
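To see concretely why integration is only a two-sided inverse of differentiation under a vanishing condition, here is a minimal Python sketch. It uses polynomial signals stored as coefficient lists, which is purely an illustrative assumption (the post works with general signals), and the helper names `trim`, `differentiate` and `integrate` are hypothetical.

```python
from fractions import Fraction

# A polynomial signal f(t) = c[0] + c[1] t + c[2] t^2 + ... is stored as its
# list of coefficients. Polynomials are only a stand-in for smooth signals.

def trim(coeffs):
    """Drop trailing zero coefficients so equal polynomials compare equal."""
    coeffs = list(coeffs)
    while coeffs and coeffs[-1] == 0:
        coeffs.pop()
    return coeffs

def differentiate(coeffs):
    """Coefficients of the derivative f'(t)."""
    return trim(Fraction(n) * c for n, c in enumerate(coeffs) if n > 0)

def integrate(coeffs):
    """Coefficients of the antiderivative of f that vanishes at t = 0."""
    return trim([Fraction(0)] + [Fraction(c) / (n + 1) for n, c in enumerate(coeffs)])

f = trim([Fraction(0), Fraction(3), Fraction(0), Fraction(5)])  # 3t + 5t^3, so f(0) = 0
g = trim([Fraction(7), Fraction(3)])                            # 7 + 3t, so g(0) != 0

d_then_i_f = integrate(differentiate(f))  # recovers f, because f(0) = 0
i_then_d_g = differentiate(integrate(g))  # always recovers g
d_then_i_g = integrate(differentiate(g))  # loses the constant term of g
```

Differentiating after integrating always returns the original signal, but integrating after differentiating only does so when the signal vanishes at the chosen base point, which is the role played by the condition on signals that vanish for $t < 0$.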

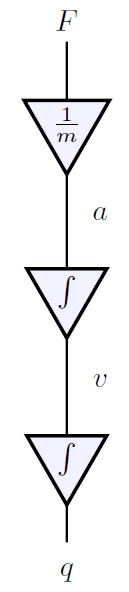

The simplest possible signal processor is a rock, which takes the 'input' given by the force $F$ on the rock and produces as 'output' the rock's position $q$. Thanks to Newton's second law $F = ma$, we can describe this using a signal-flow diagram:

Here composition of morphisms is drawn in the usual way, by attaching the output wire of one morphism to the input wire of the next.
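The rock can be imitated numerically as a composite of three boxes: multiply by $1/m$, integrate, integrate. The following is a rough Python sketch under stated assumptions: signals are sampled on a uniform time grid, integration is a cumulative trapezoid rule starting from zero, and the function names and the numbers (constant force 2, mass 4) are hypothetical choices for illustration.

```python
def scale(c, samples):
    """Scalar multiplication of a sampled signal by the constant c."""
    return [c * x for x in samples]

def integrate(samples, dt):
    """Cumulative trapezoidal integral; the antiderivative is 0 at t = 0."""
    total = 0.0
    out = [0.0]
    for prev, cur in zip(samples, samples[1:]):
        total += 0.5 * (prev + cur) * dt
        out.append(total)
    return out

def rock(force, mass, dt):
    """Compose the boxes from top to bottom: 1/m, then integrate twice."""
    acceleration = scale(1.0 / mass, force)   # a = F / m
    velocity = integrate(acceleration, dt)    # v = integral of a
    return integrate(velocity, dt)            # q = integral of v

dt = 0.01
steps = 200                                   # simulate up to t = 2
force = [2.0] * (steps + 1)                   # constant force, arbitrary units
position = rock(force, mass=4.0, dt=dt)
```

With a constant force the exact answer is $q(t) = \tfrac{1}{2}(F/m)\,t^2$, so the final sample should be close to 1 at $t = 2$.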

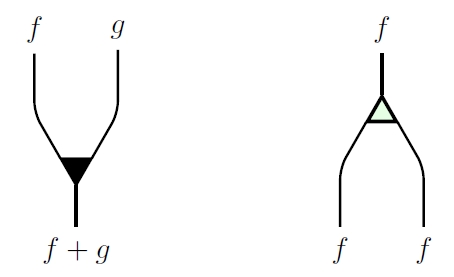

To build more interesting machines we need more building blocks, such as addition:

$$+ : (f, g) \mapsto f + g$$

and duplication:

$$\Delta : f \mapsto (f, f)$$

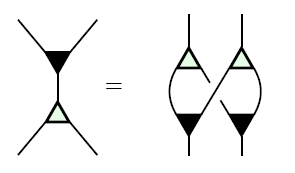

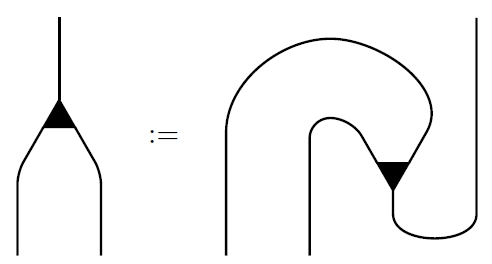

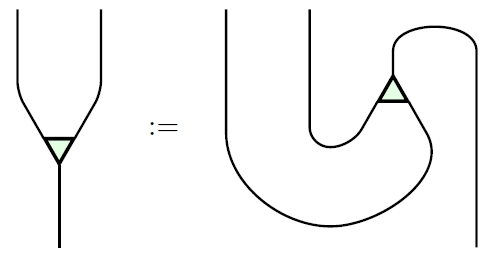

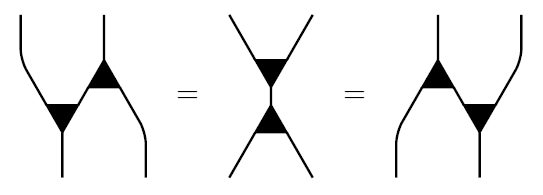

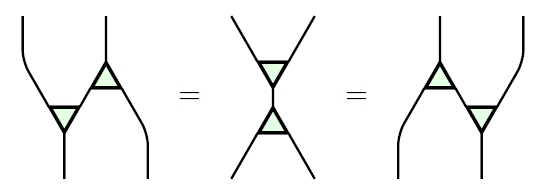

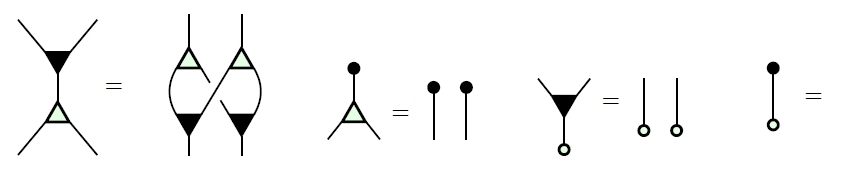

When these linear maps are written as matrices, their matrices are transposes of each other. This is reflected in the string diagrams for addition and duplication:

The second is essentially an upside-down version of the first. However, we draw addition as a dark triangle and duplication as a light one because we will later want another way to ‘turn addition upside-down’ that does not give duplication. As an added bonus, a light upside-down triangle resembles the Greek letter $\Delta$, the usual symbol for duplication.

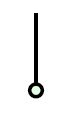

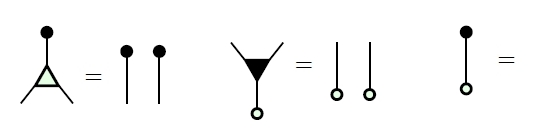

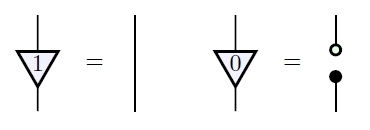

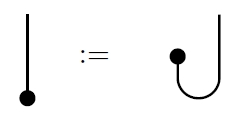

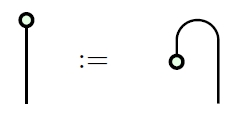

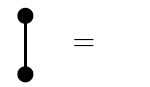

While they are typically not considered worthy of mention in control theory, for completeness we must include two other building blocks. One is the zero map from the zero-dimensional vector space $\{0\}$ to our field $k$, which we denote as $0$ and draw as follows:

The other is the zero map from $k$ to $\{0\}$, sometimes called ‘deletion’, which we denote as $!$ and draw thus:

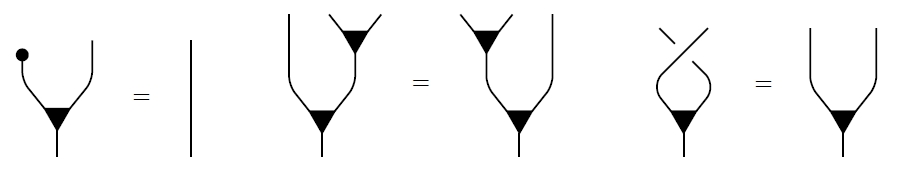

Just as the matrices for addition and duplication are transposes of each other, so are the matrices for zero and deletion, though they are rather degenerate, being $1 \times 0$ and $0 \times 1$ matrices, respectively. Addition and zero make $k$ into a commutative monoid, meaning that the following relations hold:

The equation at right is the commutative law, and the crossing of strands is the braiding:

$$B : (f, g) \mapsto (g, f)$$

by which we switch two signals. In fact this braiding is a symmetry, so it does not matter which strand goes over which:

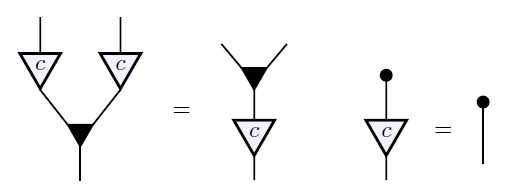

Dually, duplication and deletion make $k$ into a cocommutative comonoid. This means that if we reflect the equations obeyed by addition and zero across the horizontal axis and turn dark operations into light ones, we obtain another set of valid equations:

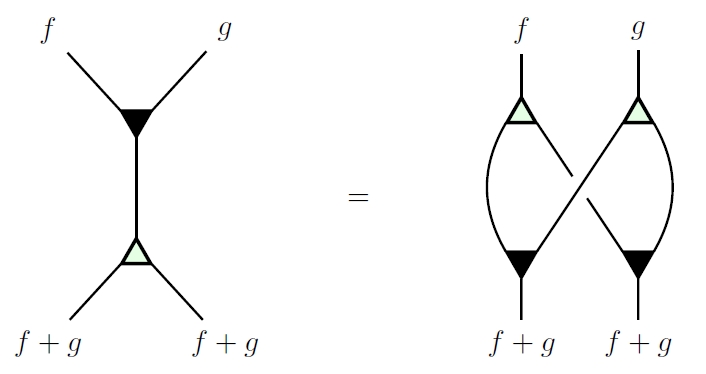

There are also relations between the monoid and comonoid operations. For example, adding two signals and then duplicating the result gives the same output as duplicating each signal and then adding the results:

This diagram is familiar in the theory of Hopf algebras, or more generally bialgebras. Here it is an example of the fact that the monoid operations on $k$ are comonoid homomorphisms, or equivalently, the comonoid operations are monoid homomorphisms.
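Both the transpose remark and the law that adding then duplicating equals duplicating then adding can be checked with small matrices. Here is a minimal Python sketch, assuming the convention that matrices act on column vectors (so the matrix product `a * b` means "apply `b` first"); the helper names are hypothetical, and zero and deletion are left out because their $1 \times 0$ and $0 \times 1$ matrices are awkward to store as nested lists.

```python
def matmul(a, b):
    """Matrix product a * b, i.e. apply b first and then a."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def transpose(a):
    return [list(row) for row in zip(*a)]

def direct_sum(a, b):
    """Block-diagonal matrix: a acts on the first wires, b on the rest."""
    top = [row + [0] * len(b[0]) for row in a]
    bottom = [[0] * len(a[0]) + row for row in b]
    return top + bottom

ADD = [[1, 1]]             # (f, g) -> f + g
DUP = [[1], [1]]           # f -> (f, f)
ID = [[1]]                 # identity wire
SWAP = [[0, 1], [1, 0]]    # braiding (f, g) -> (g, f)

# Left side: add two signals, then duplicate the result.
lhs = matmul(DUP, ADD)

# Right side: duplicate each signal, swap the middle two wires, add in pairs.
shuffle = direct_sum(direct_sum(ID, SWAP), ID)
rhs = matmul(direct_sum(ADD, ADD), matmul(shuffle, direct_sum(DUP, DUP)))
```

Both sides come out as the $2 \times 2$ matrix of ones, sending $(f, g)$ to $(f + g, f + g)$.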

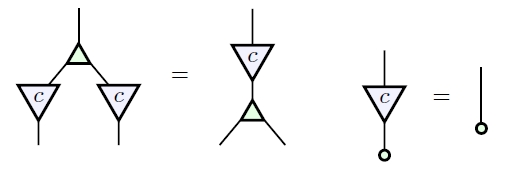

We summarize this situation by saying that $k$ is a bimonoid. These are all the bimonoid laws, drawn as diagrams:

The last equation means we can actually make the diagram at left disappear, since it equals the identity morphism on the 0-dimensional vector space, which is drawn as nothing.

So far all our string diagrams denote linear maps. We can treat these as morphisms in the category $\mathrm{FinVect}_k$, where objects are finite-dimensional vector spaces over a field $k$ and morphisms are linear maps. This category is equivalent to the category where the only objects are vector spaces $k^n$ for $n \ge 0$, and then morphisms can be seen as $n \times m$ matrices. The space of signals is a vector space $V$ over $k$ which may not be finite-dimensional, but this does not cause a problem: an $n \times m$ matrix with entries in $k$ still defines a linear map from $V^m$ to $V^n$ in a functorial way.

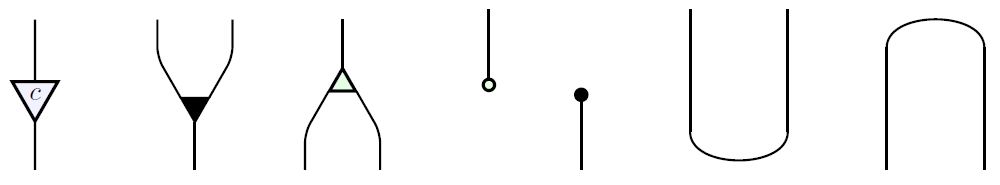

In applications of string diagrams to quantum theory, we make $\mathrm{FinVect}_k$ into a symmetric monoidal category using the tensor product of vector spaces. In control theory, we instead make $\mathrm{FinVect}_k$ into a symmetric monoidal category using the direct sum of vector spaces. In Lemma 1 of our paper we prove that for any field $k$, $\mathrm{FinVect}_k$ with direct sum is generated as a symmetric monoidal category by the one object $k$ together with these morphisms:

where $c \in k$ is arbitrary.

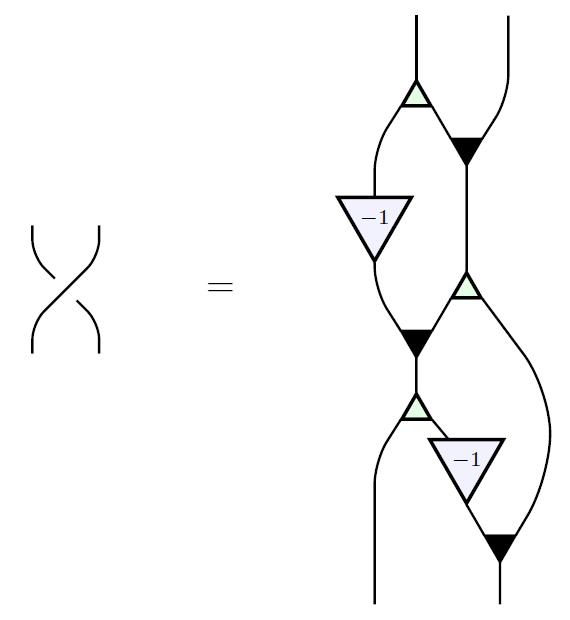

However, these generating morphisms obey some unexpected relations! For example, we have:

Thus, it is important to find a complete set of relations obeyed by these generating morphisms, thus obtaining a presentation of $\mathrm{FinVect}_k$ as a symmetric monoidal category. We do this in Theorem 2. In brief, these relations say:

(1) $(k, +, 0, \Delta, !)$ is a bicommutative bimonoid;

(2) the rig operations of $k$ can be recovered from the generating morphisms;

(3) all the generating morphisms commute with scalar multiplication.

Here item (2) means that $+$, $\cdot$, $0$ and $1$ in the field $k$ can be expressed in terms of signal-flow diagrams as follows:

Multiplicative inverses cannot be so expressed, so our signal-flow diagrams so far do not know that $k$ is a field. Additive inverses also cannot be expressed in this way. So, we expect that a version of Theorem 2 will hold whenever $k$ is a mere rig: that is, a ‘ring without negatives’, like the natural numbers. The one change is that instead of working with vector spaces, we should work with finitely presented free $k$-modules.
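One way to read item (2) is as four small identities: $b + c$ is "duplicate, scale the copies by $b$ and $c$, then add", $bc$ is "scale by $b$, then by $c$", $0$ is "delete, then zero", and $1$ is the identity wire. The Python sketch below checks these over the rationals. The class `Lin` and the helpers `then`, `beside` and `as_scalar` are hypothetical names introduced only for this illustration, and storing linear maps as functions on tuples is an assumption made to keep the zero-dimensional cases easy.

```python
from fractions import Fraction

class Lin:
    """A linear map k^n_in -> k^n_out, stored as a function on tuples."""
    def __init__(self, n_in, n_out, fn):
        self.n_in, self.n_out, self.fn = n_in, n_out, fn

    def __call__(self, *xs):
        assert len(xs) == self.n_in
        return self.fn(*xs)

def then(f, g):
    """Do f first, then g (reading a diagram from top to bottom)."""
    assert f.n_out == g.n_in
    return Lin(f.n_in, g.n_out, lambda *xs: g(*f(*xs)))

def beside(f, g):
    """Direct sum: f acts on the first wires, g on the remaining ones."""
    return Lin(f.n_in + g.n_in, f.n_out + g.n_out,
               lambda *xs: f(*xs[:f.n_in]) + g(*xs[f.n_in:]))

ADD = Lin(2, 1, lambda x, y: (x + y,))
ZERO = Lin(0, 1, lambda: (Fraction(0),))
DUP = Lin(1, 2, lambda x: (x, x))
DELETE = Lin(1, 0, lambda x: ())
IDENTITY = Lin(1, 1, lambda x: (x,))

def scalar(c):
    return Lin(1, 1, lambda x: (c * x,))

def as_scalar(f):
    """A linear map k -> k is multiplication by its value at 1."""
    return f(Fraction(1))[0]

b, c = Fraction(2, 3), Fraction(5, 7)
sum_diagram = then(then(DUP, beside(scalar(b), scalar(c))), ADD)
product_diagram = then(scalar(b), scalar(c))
zero_diagram = then(DELETE, ZERO)
```

Nothing in these diagrams produces $-b$ or $1/b$ from $b$, which matches the remark that only the rig operations are visible at this stage.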

Item (3), the fact that all our generating morphisms commute with scalar multiplication, amounts to these diagrammatic equations:

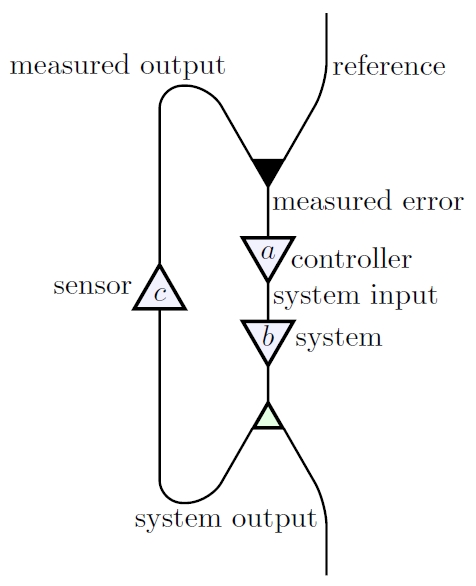

While Theorem 2 is a step towards understanding the category-theoretic underpinnings of control theory, it does not treat signal-flow diagrams that include ‘feedback’. Feedback is one of the most fundamental concepts in control theory because a control system without feedback may be highly sensitive to disturbances or unmodeled behavior. Feedback allows these uncontrolled behaviors to be mollified. As a string diagram, a basic feedback system might look schematically like this:

The user inputs a ‘reference’ signal, which is fed into a controller, whose output is fed into a system, which control theorists call a ‘plant’, which in turn produces its own output. But then the system’s output is duplicated, and one copy is fed into a sensor, whose output is added to (or, if we prefer, subtracted from) the reference signal.

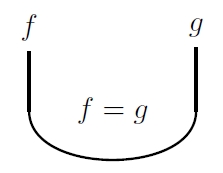

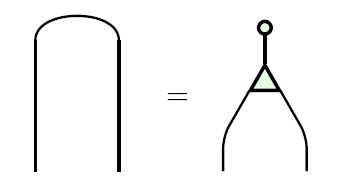

In string diagrams—unlike in the usual thinking on control theory—it is essential to be able to read any diagram from top to bottom as a composite of tensor products of generating morphisms. Thus, to incorporate the idea of feedback, we need two more generating morphisms. These are the ‘cup’:

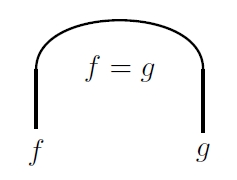

and ‘cap’:

These are not maps: they are relations. The cup imposes the relation that its two inputs be equal, while the cap does the same for its two outputs. This is a way of describing how a signal flows around a bend in a wire.

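To make this precise, we use a category called $\mathrm{FinRel}_k$. An object of this category is a finite-dimensional vector space over $k$, while a morphism from $U$ to $V$, denoted $L : U \nrightarrow V$, is a linear relation, meaning a linear subspace

$$L \subseteq U \oplus V$$

In particular, when $k = \mathbb{R}(s)$, a linear relation $L : k^m \nrightarrow k^n$ is just an arbitrary system of constant-coefficient linear ordinary differential equations relating $m$ input variables and $n$ output variables.

Since the direct sum $U \oplus V$ is also the cartesian product of $U$ and $V$, a linear relation is indeed a relation in the usual sense, but with the property that if $u \in U$ is related to $v \in V$ and $u' \in U$ is related to $v' \in V$ then $cu + c'u'$ is related to $cv + c'v'$ whenever $c, c' \in k$.

We compose linear relations $L : U \nrightarrow V$ and $L' : V \nrightarrow W$ as follows:

$$L'L = \{(u, w) : \; \exists\, v \in V \;\; (u, v) \in L \text{ and } (v, w) \in L'\}$$

Any linear map $f : U \to V$ gives a linear relation $F : U \nrightarrow V$, namely the graph of that map:

$$F = \{(u, f(u)) : u \in U\}$$

Composing linear maps thus becomes a special case of composing linear relations, so $\mathrm{FinVect}_k$ becomes a subcategory of $\mathrm{FinRel}_k$. Furthermore, we can make $\mathrm{FinRel}_k$ into a monoidal category using direct sums, and it becomes symmetric monoidal using the braiding already present in $\mathrm{FinVect}_k$.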
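Composition of linear relations is easy to experiment with when the field is finite, since every relation is then a finite set of pairs. The following Python sketch assumes the field $\mathbb{Z}/3$ purely so that everything can be enumerated (the post's field of interest is $\mathbb{R}(s)$), and the function names are hypothetical. It checks that the graph of a composite of linear maps equals the composite of their graphs.

```python
from itertools import product

P = 3  # work over the field Z/3; an assumption made so relations are finite sets

def vectors(n):
    """All vectors in k^n, as tuples."""
    return list(product(range(P), repeat=n))

def graph(matrix, n_in):
    """The linear relation given by the graph of x -> matrix * x (mod P)."""
    return {(x, tuple(sum(a * xi for a, xi in zip(row, x)) % P for row in matrix))
            for x in vectors(n_in)}

def compose(first, second):
    """All (u, w) with (u, v) in `first` and (v, w) in `second` for some v."""
    return {(u, w) for (u, v) in first for (v2, w) in second if v == v2}

def is_subspace(rel):
    """Check that a relation is closed under addition and scalar multiples."""
    def add(p, q):
        return tuple(tuple((a + b) % P for a, b in zip(s, t)) for s, t in zip(p, q))
    def scale(c, p):
        return tuple(tuple((c * a) % P for a in s) for s in p)
    return (bool(rel)
            and all(add(p, q) in rel for p in rel for q in rel)
            and all(scale(c, p) in rel for c in range(P) for p in rel))

F = [[1, 2], [0, 1]]   # a linear map k^2 -> k^2
G = [[1, 1]]           # a linear map k^2 -> k
GF = [[1, 0]]          # the matrix product G * F, reduced mod 3

composite = compose(graph(F, 2), graph(G, 2))
not_linear = {((0,), (0,)), ((1,), (1,))}  # a relation that is not a subspace
```

The same `compose` works for relations that are not graphs of maps, which is what makes cups and caps possible.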

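In these terms, the cup is the linear relation

$$\cup : k^2 \nrightarrow \{0\}$$

given by

$$\cup = \{(x, x, 0) : x \in k\} \subseteq k^2 \oplus \{0\}$$

while the cap is the linear relation

$$\cap : \{0\} \nrightarrow k^2$$

given by

$$\cap = \{(0, x, x) : x \in k\} \subseteq \{0\} \oplus k^2$$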

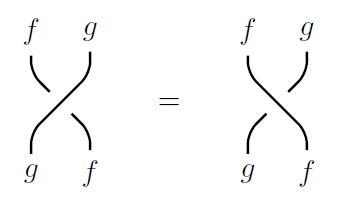

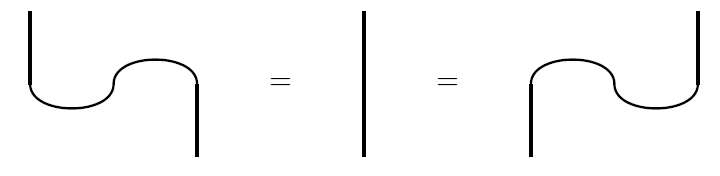

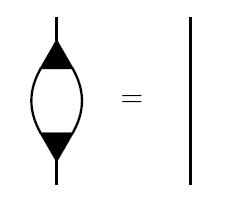

These obey the zigzag relations:

Thus, they make $\mathrm{FinRel}_k$ into a compact closed category where $k$, and thus every object, is its own dual.

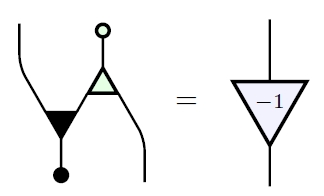

Besides feedback, one of the things that make the cap and cup useful is that they allow any morphism to be ‘plugged in backwards’ and thus ‘turned around’. For instance, turning around integration:

we obtain differentiation. In general, using caps and cups we can turn around any linear relation $L : U \nrightarrow V$ and obtain a linear relation $L^\dagger : V \nrightarrow U$ called the adjoint of $L$, which turns out to be given by

$$L^\dagger = \{(v, u) : (u, v) \in L\}$$

For example, if $c \in k$ is nonzero, the adjoint of scalar multiplication by $c$ is multiplication by $c^{-1}$:

Thus, caps and cups allow us to express multiplicative inverses in terms of signal-flow diagrams! One might think that a problem arises when $c = 0$, but no: the adjoint of scalar multiplication by $0$ is

$$\{(0, x) : x \in k\} \subseteq k \oplus k$$
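The zigzag relation and the ‘turning around’ construction can both be checked by brute force over a small finite field. This is a minimal Python sketch assuming the field $\mathbb{Z}/5$ (chosen only so that relations are finite sets); relations are stored as sets of (input tuple, output tuple) pairs, and all names are hypothetical.

```python
P = 5          # work over Z/5; an illustrative assumption, not the post's field
K = range(P)

def compose(first, second):
    """All (u, w) with (u, v) in `first` and (v, w) in `second` for some v."""
    return {(u, w) for (u, v) in first for (v2, w) in second if v == v2}

def beside(r, s):
    """Direct sum of relations: r on the first wires, s on the rest."""
    return {(u1 + u2, v1 + v2) for (u1, v1) in r for (u2, v2) in s}

def adjoint(r):
    """Swap inputs and outputs."""
    return {(v, u) for (u, v) in r}

def scalar(c):
    """The graph of multiplication by c, as a relation k -> k."""
    return {((x,), ((c * x) % P,)) for x in K}

IDENTITY = scalar(1)
CUP = {((x, x), ()) for x in K}   # two inputs forced to be equal, no outputs
CAP = {((), (x, x)) for x in K}   # no inputs, two outputs forced to be equal

# One zigzag: a cap next to a wire, followed by a wire next to a cup.
zigzag = compose(beside(CAP, IDENTITY), beside(IDENTITY, CUP))

def turn_around(r):
    """Plug a relation k -> k in backwards using one cap and one cup."""
    step1 = beside(CAP, IDENTITY)                   # k   -> k^3
    step2 = beside(beside(IDENTITY, r), IDENTITY)   # k^3 -> k^3
    step3 = beside(IDENTITY, CUP)                   # k^3 -> k
    return compose(compose(step1, step2), step3)

inverse_of_two = turn_around(scalar(2))    # 2 * 3 = 1 in Z/5
turned_zero = turn_around(scalar(0))
```

Turning around multiplication by 2 gives multiplication by 3, its inverse in $\mathbb{Z}/5$, while turning around multiplication by 0 gives a relation that is not the graph of any map.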

In Lemma 3 we show that $\mathrm{FinRel}_k$ is generated, as a symmetric monoidal category, by these morphisms:

where $c \in k$ is arbitrary.

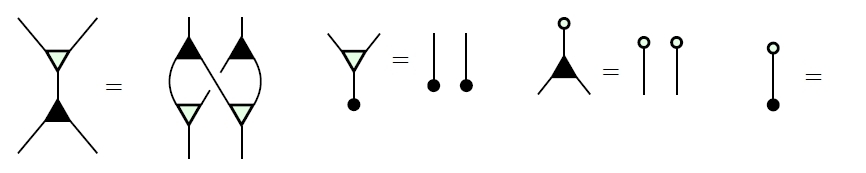

In Theorem 4 we find a complete set of relations obeyed by these generating morphisms, thus giving a presentation of $\mathrm{FinRel}_k$ as a symmetric monoidal category. To describe these relations, it is useful to work with adjoints of the generating morphisms. We have already seen that the adjoint of scalar multiplication by $c$ is scalar multiplication by $c^{-1}$, except when $c = 0$. Taking adjoints of the other four generating morphisms of $\mathrm{FinVect}_k$, we obtain four important but perhaps unfamiliar linear relations. We draw these as ‘turned around’ versions of the original generating morphisms:

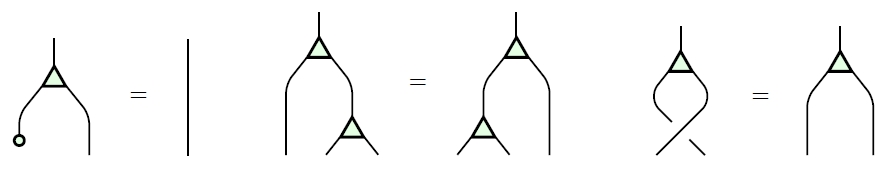

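• Coaddition is a linear relation from $k$ to $k^2$ that holds when the two outputs sum to the input: $+^\dagger = \{(x, y, z) : x = y + z\} \subseteq k \oplus k^2$

• Cozero is a linear relation from $k$ to $\{0\}$ that holds when the input is zero: $0^\dagger = \{(0, 0)\} \subseteq k \oplus \{0\}$

• Coduplication is a linear relation from $k^2$ to $k$ that holds when the two inputs both equal the output: $\Delta^\dagger = \{(x, y, z) : x = y = z\} \subseteq k^2 \oplus k$

• Codeletion is a linear relation from $\{0\}$ to $k$ that holds always: $!^\dagger = \{(0, x) : x \in k\} \subseteq \{0\} \oplus k$

Since $+^\dagger$, $0^\dagger$, $\Delta^\dagger$ and $!^\dagger$ automatically obey turned-around versions of the relations obeyed by $+$, $0$, $\Delta$ and $!$, we see that $k$ acquires a second bicommutative bimonoid structure when considered as an object in $\mathrm{FinRel}_k$.

Moreover, the four dark operations make $k$ into a Frobenius monoid. This means that $(k, +, 0)$ is a monoid, $(k, +^\dagger, 0^\dagger)$ is a comonoid, and the Frobenius relation holds: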

All three expressions in this equation are linear relations saying that the sum of the two inputs equals the sum of the two outputs.

The operation sending each linear relation to its adjoint extends to a contravariant functor

$$\dagger : \mathrm{FinRel}_k \to \mathrm{FinRel}_k$$

which obeys a list of properties that are summarized by saying that $\mathrm{FinRel}_k$ is a †-compact category. Because two of the operations in the Frobenius monoid are adjoints of the other two, it is a †-Frobenius monoid.

This Frobenius monoid is also special, meaning that comultiplication (in this case $+^\dagger$) followed by multiplication (in this case $+$) equals the identity:

This Frobenius monoid is also commutative—and cocommutative, but for Frobenius monoids this follows from commutativity.
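The Frobenius relation and the ‘special’ law for addition and coaddition can be verified exhaustively over a small finite field. Here is a minimal Python sketch assuming the field $\mathbb{Z}/3$ (an illustrative choice, made only so that relations are finite sets), with relations stored as sets of (input tuple, output tuple) pairs; the names are hypothetical.

```python
P = 3          # work over Z/3; an assumption made only to keep relations finite
K = range(P)

def then(first, second):
    """Compose relations, doing `first` and then `second`."""
    return {(u, w) for (u, v) in first for (v2, w) in second if v == v2}

def beside(r, s):
    """Direct sum of relations: r on the first wires, s on the rest."""
    return {(u1 + u2, v1 + v2) for (u1, v1) in r for (u2, v2) in s}

def adjoint(r):
    return {(v, u) for (u, v) in r}

IDENTITY = {((x,), (x,)) for x in K}
ADD = {((x, y), ((x + y) % P,)) for x in K for y in K}
COADD = adjoint(ADD)   # one input, two outputs that sum to it

# The three expressions in the Frobenius relation, all relations k^2 -> k^2.
left = then(beside(COADD, IDENTITY), beside(IDENTITY, ADD))
middle = then(ADD, COADD)
right = then(beside(IDENTITY, COADD), beside(ADD, IDENTITY))

# What all three should say: the inputs and the outputs have the same sum.
same_sum = {((a, b), (c, d)) for a in K for b in K for c in K for d in K
            if (a + b) % P == (c + d) % P}

# 'Special': coaddition followed by addition is the identity on k.
special = then(COADD, ADD)
```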

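Starting around 2008, commutative special †-Frobenius monoids have become important in the categorical foundations of quantum theory, where they can be understood as ‘classical structures’ for quantum systems. The category $\mathrm{FinHilb}$ of finite-dimensional Hilbert spaces and linear maps is a †-compact category, where any linear map $f : H \to K$ has an adjoint $f^\dagger : K \to H$ given by

$$\langle f^\dagger \phi, \psi \rangle = \langle \phi, f \psi \rangle$$

for all $\psi \in H$, $\phi \in K$. A commutative special †-Frobenius monoid in $\mathrm{FinHilb}$ is then the same as a Hilbert space with a chosen orthonormal basis. The reason is that given an orthonormal basis $\psi_i$ for a finite-dimensional Hilbert space $H$, we can make $H$ into a commutative special †-Frobenius monoid with multiplication $m : H \otimes H \to H$ given by

$$m(\psi_i \otimes \psi_j) = \begin{cases} \psi_i & i = j \\ 0 & i \ne j \end{cases}$$

and unit $i : \mathbb{C} \to H$ given by

$$i(1) = \sum_i \psi_i$$

The comultiplication $m^\dagger$ duplicates basis states:

$$m^\dagger(\psi_i) = \psi_i \otimes \psi_i$$

Conversely, any commutative special †-Frobenius monoid in $\mathrm{FinHilb}$ arises this way.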

Considerably earlier, around 1995, commutative Frobenius monoids were recognized as important in topological quantum field theory. The reason, ultimately, is that the free symmetric monoidal category on a commutative Frobenius monoid is the category with 2-dimensional oriented cobordisms as morphisms. But the free symmetric monoidal category on a commutative special Frobenius monoid was worked out even earlier: it is the category with finite sets as objects, where a morphism $f : X \to Y$ is an isomorphism class of cospans

$$X \longrightarrow S \longleftarrow Y$$

This category can be made into a †-compact category in an obvious way, and then the 1-element set becomes a commutative special †-Frobenius monoid.

For all these reasons, it is interesting to find a commutative special †-Frobenius monoid lurking at the heart of control theory! However, the Frobenius monoid here has yet another property, which is more unusual. Namely, the unit $0$ followed by the counit $0^\dagger$ is the identity:

We call a special Frobenius monoid that also obeys this extra law extra-special. One can check that the free symmetric monoidal category on a commutative extra-special Frobenius monoid is the category with finite sets as objects, where a morphism $f : X \to Y$ is an equivalence relation on the disjoint union $X \sqcup Y$, and we compose $f : X \to Y$ and $g : Y \to Z$ by letting $f$ and $g$ generate an equivalence relation on $X \sqcup Y \sqcup Z$ and then restricting this to $X \sqcup Z$.
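The composition rule for these equivalence relations can be sketched in a few lines of Python. This is only an illustration under stated assumptions: a morphism is stored as a set of blocks, each block a frozenset of tagged elements `("in", x)` or `("out", y)`, and the union-find helper and tag names are hypothetical.

```python
def compose(f, g):
    """Compose f : X -> Y and g : Y -> Z, each a partition into tagged blocks."""
    parent = {}

    def find(a):
        parent.setdefault(a, a)
        while parent[a] != a:
            parent[a] = parent[parent[a]]   # path compression
            a = parent[a]
        return a

    def union(a, b):
        parent[find(a)] = find(b)

    # Retag so the outputs of f and the inputs of g are both labelled "Y".
    retag_f = {"in": "X", "out": "Y"}
    retag_g = {"in": "Y", "out": "Z"}
    for blocks, retag in ((f, retag_f), (g, retag_g)):
        for block in blocks:
            members = [(retag[side], name) for side, name in block]
            for member in members:
                union(members[0], member)

    # Collect the generated equivalence classes on X, Y and Z together.
    merged = {}
    for a in list(parent):
        merged.setdefault(find(a), set()).add(a)

    # Restrict to X and Z, dropping classes that only involve Y.
    final = {"X": "in", "Z": "out"}
    result = set()
    for block in merged.values():
        kept = frozenset((final[tag], name) for tag, name in block if tag in final)
        if kept:
            result.add(kept)
    return result

# f relates 1 to a and 2 to b; g puts a, b and p in one class.
f = {frozenset({("in", 1), ("out", "a")}), frozenset({("in", 2), ("out", "b")})}
g = {frozenset({("in", "a"), ("in", "b"), ("out", "p")})}
fg = compose(f, g)
```

In this example the composite puts 1, 2 and p into a single equivalence class. Classes that live entirely in the middle set simply disappear, which reflects the extra-special law.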

As if this were not enough, the light operations share many properties with the dark ones. In particular, these operations make $k$ into a commutative extra-special †-Frobenius monoid in a second way. In summary:

• $(k, +, 0, \Delta, !)$ is a bicommutative bimonoid;

• $(k, \Delta^\dagger, !^\dagger, +^\dagger, 0^\dagger)$ is a bicommutative bimonoid;

• $(k, +, 0, +^\dagger, 0^\dagger)$ is a commutative extra-special †-Frobenius monoid;

• $(k, \Delta^\dagger, !^\dagger, \Delta, !)$ is a commutative extra-special †-Frobenius monoid.

It should be no surprise that with all these structures built in, signal-flow diagrams are a powerful method of designing processes.

However, it is surprising that most of these structures are present in a seemingly very different context: the so-called ZX calculus, a diagrammatic formalism for working with complementary observables in quantum theory. This arises naturally when one has an $n$-dimensional Hilbert space $H$ with two orthonormal bases $\psi_i, \phi_i$ that are mutually unbiased, meaning that

$$|\langle \psi_i, \phi_j \rangle|^2 = \frac{1}{n}$$

for all $1 \le i, j \le n$. Each orthonormal basis makes $H$ into a commutative special †-Frobenius monoid in $\mathrm{FinHilb}$. Moreover, the multiplication and unit of either one of these Frobenius monoids fits together with the comultiplication and counit of the other to form a bicommutative bimonoid. So, we have all the structure present in the list above, except that these Frobenius monoids are only extra-special if $H$ is 1-dimensional.
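For a concrete instance, the standard basis of $\mathbb{C}^2$ and the basis obtained from it by a Hadamard rotation are mutually unbiased. The Python sketch below checks this and also computes the unit followed by the counit for the Frobenius monoid built from the first basis. The choice of these two bases is an assumption made for illustration; they are not taken from the post.

```python
import math

def inner(u, v):
    """Inner product on C^n, conjugate-linear in the first slot."""
    return sum(complex(a).conjugate() * b for a, b in zip(u, v))

n = 2
r = 1 / math.sqrt(2)
psi = [(1, 0), (0, 1)]       # standard basis of C^2
phi = [(r, r), (r, -r)]      # Hadamard-rotated basis

# Mutual unbiasedness: every overlap should have squared modulus 1/n.
overlaps = [abs(inner(p, q)) ** 2 for p in psi for q in phi]

# The unit sends 1 to the sum of the basis vectors; the counit is its adjoint,
# so unit followed by counit sends 1 to the squared norm of that sum.
unit_vector = tuple(sum(basis[i] for basis in psi) for i in range(n))
unit_then_counit = inner(unit_vector, unit_vector)
```

The composite of unit and counit comes out as multiplication by $n = 2$ rather than the identity, consistent with the remark that these Frobenius monoids are extra-special only in dimension 1.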

The field $k$ is also a 1-dimensional vector space, but this is a red herring: in $\mathrm{FinRel}_k$ every finite-dimensional vector space naturally acquires all four structures listed above, since addition, zero, duplication and deletion are well-defined and obey all the relations we have discussed. Jason and I focus on $k$ in our paper simply because it generates all the objects of $\mathrm{FinRel}_k$ via direct sum.

Finally, in $\mathrm{FinRel}_k$ the cap and cup are related to the light and dark operations as follows:

Note the curious factor of $-1$ in the second equation, which breaks some of the symmetry we have seen so far. This equation says that two elements $x, y \in k$ sum to zero if and only if $-x = y$. Using the zigzag relations, the two equations above give

We thus see that in $\mathrm{FinRel}_k$, both additive and multiplicative inverses can be expressed in terms of the generating morphisms used in signal-flow diagrams.

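Theorem 4 of our paper gives a presentation of $\mathrm{FinRel}_k$ based on the ideas just discussed. Briefly, it says that $\mathrm{FinRel}_k$ is equivalent to the symmetric monoidal category generated by an object $k$ and these morphisms:

• addition $+ : k^2 \to k$

• zero $0 : \{0\} \to k$

• duplication $\Delta : k \to k^2$

• deletion $! : k \to \{0\}$

• scalar multiplication $c : k \to k$ for any $c \in k$

• cup $\cup : k^2 \nrightarrow \{0\}$

• cap $\cap : \{0\} \nrightarrow k^2$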

obeying these relations:

(1) $(k, +, 0, \Delta, !)$ is a bicommutative bimonoid;

(2) $\cap$ and $\cup$ obey the zigzag equations;

(3) $(k, +, 0, +^\dagger, 0^\dagger)$ is a commutative extra-special †-Frobenius monoid;

(4) $(k, \Delta^\dagger, !^\dagger, \Delta, !)$ is a commutative extra-special †-Frobenius monoid;

(5) the field operations of $k$ can be recovered from the generating morphisms;

(6) the first four generating morphisms listed above (addition, zero, duplication and deletion) commute with scalar multiplication.

Note that item (2) makes $\mathrm{FinRel}_k$ into a †-compact category, allowing us to mention the adjoints of generating morphisms in the subsequent relations. Item (5) means that $+$, $\cdot$, $0$, $1$ and also additive and multiplicative inverses in the field $k$ can be expressed in terms of signal-flow diagrams in the manner we have explained.

So, we have a good categorical understanding of the linear algebra used in signal flow diagrams!

Now Jason is moving ahead to apply this to some interesting problems… but that’s another story, for later.

Re: Categories in Control

All these diagrams are enough to make my head spin!

It seems to me there’s probably a 2-categorical structure here, where a 2-cell would be an inclusion of one relation inside another. Is this something you’ve looked at?

Unrelatedly, is there a categorical characterization of when a network is “stable”?