In reply to Gavin Wraith’s questions, I’ll start by reposting a few of my own summaries of what Ambjörn, Loll and Jurkiewicz have been doing. The first is from week206, written on May 10, 2004, back when I still worked on quantum gravity. It’s part of a report from a quantum gravity conference in Marseille.

I’m delighted to see some real progress on getting 4d spacetime to emerge from nonperturbative quantum gravity:

3) Jan Ambjorn, Jerzy Jurkiewicz and Renate Loll, Emergence of a 4d world from causal quantum gravity, available as hep-th/0404156.

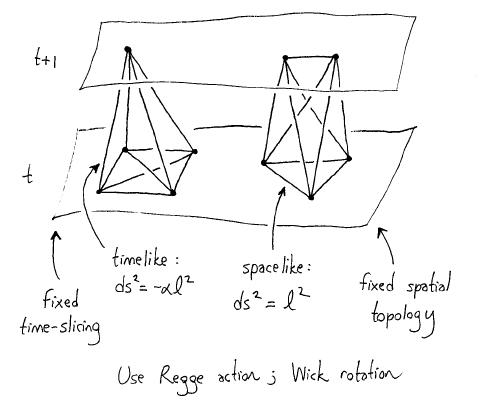

This trio of researchers have revitalized an approach called “dynamical triangulations” where we calculate path integrals in quantum gravity by summing over different ways of building spacetime out of little 4-simplices. They showed that if we restrict this sum to spacetimes with a well-behaved concept of causality, we get good results. This is a bit startling, because after decades of work, most researchers had despaired of getting general relativity to emerge at large distances starting from the dynamical triangulations approach. But, these people hadn’t noticed a certain flaw in the approach… a flaw which Loll and collaborators noticed and fixed!

If you don’t know what a path integral is, don’t worry: it’s pretty simple. Basically, in quantum physics we can calculate the expected value of any physical quantity by doing an average over all possible histories of the system in question, with each history weighted by a complex number called its “amplitude”. For a particle, a history is just a path in space; to average over all histories is to integrate over all paths - hence the term “path integral”. But in quantum gravity, a history is nothing other than a SPACETIME.

Mathematically, a “spacetime” is something like a 4-dimensional manifold equipped with a Lorentzian metric. But it’s hard to integrate over all of these - there are just too darn many. So, sometimes people instead treat spacetime as made of little discrete building blocks, turning the path integral into a sum. You can either take this seriously or treat it as a kind of approximation. Luckily, the calculations work the same either way!

If you’re looking to build spacetime out of some sort of discrete building block, a handy candidate is the “4-simplex”: the 4-dimensional analogue of a tetrahedron. This shape is rigid once you fix the lengths of its 10 edges, which correspond to the 10 components of the metric tensor in general relativity.
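The count of 10 can be checked directly. The following is a small sketch, with function names of my own choosing: an n-simplex has one edge per pair of its n + 1 vertices, and a symmetric n × n metric tensor has n(n + 1)/2 independent components, so the two numbers agree in every dimension, not just for n = 4.

```python
from math import comb


def simplex_edges(n):
    """Number of edges of an n-simplex: one per pair of its n + 1 vertices."""
    return comb(n + 1, 2)


def metric_components(n):
    """Number of independent components of a symmetric n x n metric tensor."""
    return n * (n + 1) // 2


# Compare the two counts in dimensions 1 through 4.
for n in range(1, 5):
    print(n, simplex_edges(n), metric_components(n))
```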

There are lots of approaches to the path integrals in quantum gravity that start by chopping spacetime into 4-simplices. The weird special thing about dynamical triangulations is that here we usually assume every 4-simplex in spacetime has the same shape. The different spacetimes arise solely from different ways of sticking the 4-simplices together.

Why such a drastic simplifying assumption? To make calculations quick and easy! The goal is to get models where you can simulate quantum geometry on your laptop - or at least a supercomputer. The hope is that simplifying assumptions about physics at the Planck scale will wash out and not make much difference on large length scales.

Computations using the so-called “renormalization group flow” suggest that this hope is true if the path integral is dominated by spacetimes that look, when viewed from afar, almost like 4d manifolds with smooth metrics. Given this, it seems we’re bound to get general relativity at large distance scales - perhaps with a nonzero cosmological constant, and perhaps including various forms of matter.

Unfortunately, in all previous dynamical triangulation models, the path integral was not dominated by spacetimes that look like nice 4d manifolds from afar! Depending on the details, one either got a “crumpled phase” dominated by spacetimes where almost all the 4-simplices touch each other, or a “branched polymer phase” dominated by spacetimes where the 4-simplices form treelike structures. There’s a transition between these two phases, but unfortunately it seems to be a 1st-order phase transition - not the sort we can get anything useful out of. For a nice review of these calculations, see:

4) Renate Loll, Discrete approaches to quantum gravity in four dimensions, available as gr-qc/9805049 or as a website at Living Reviews in Relativity, http://www.livingreviews.org/Articles/Volume1/1998-13loll/

Luckily, all these calculations shared a common flaw!

Computer calculations of path integrals become a lot easier if instead of assigning a complex “amplitude” to each history, we assign it a positive real number: a “relative probability”. The basic reason is that unlike positive real numbers, complex numbers can cancel out when you sum them!

When we have relative probabilities, it’s the highly probable histories that contribute most to the expected value of any physical quantity. We can use something called the “Metropolis algorithm” to spot these highly probable histories and spend most of our time focusing on them.
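To make this concrete, here is a minimal sketch of the Metropolis algorithm on a toy system, not the simulation used in the papers cited here. The ten "histories" on a ring, the weight function `toy_weight` and all other names are assumptions made up for illustration. The random walk proposes a neighbouring history and accepts it with probability min(1, ratio of relative probabilities), so it lingers where the weight is large.

```python
import math
import random


def metropolis_average(weight, observable, n_states, n_steps, seed=0):
    """Estimate the weighted average of `observable` over states 0..n_states-1."""
    rng = random.Random(seed)
    state = 0
    total = 0.0
    for _ in range(n_steps):
        # Propose a neighbouring state on a ring (a symmetric proposal).
        proposal = (state + rng.choice((-1, 1))) % n_states
        # Accept with probability min(1, weight(proposal) / weight(state)).
        if rng.random() < weight(proposal) / weight(state):
            state = proposal
        total += observable(state)
    return total / n_steps


def exact_average(weight, observable, n_states):
    """Brute-force weighted average, feasible only because the toy is tiny."""
    norm = sum(weight(s) for s in range(n_states))
    return sum(weight(s) * observable(s) for s in range(n_states)) / norm


def toy_weight(s):
    """Hypothetical relative probability, peaked at state 3."""
    return math.exp(-0.5 * (s - 3) ** 2)


estimate = metropolis_average(toy_weight, float, 10, 200_000)
exact = exact_average(toy_weight, float, 10)
print(f"Metropolis estimate: {estimate:.3f}, exact: {exact:.3f}")
```

In a real calculation the brute-force sum is out of reach because there are far too many histories, which is exactly why one samples instead.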

This doesn’t work when we have complex amplitudes, since even a history with a big amplitude can be canceled out by a nearby history with the opposite big amplitude! Indeed, this happens all the time. So, instead of histories with big amplitudes, it’s the bunches of histories that happen not to completely cancel out that really matter. Nobody knows an efficient general-purpose algorithm to deal with this!
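A toy illustration of the cancellation, under assumptions of my own: take 1000 made-up histories whose amplitudes all have size 1 but whose phases are spread evenly around the circle. Every single amplitude is "big", yet the sum is essentially zero, while the corresponding positive weights simply add up.

```python
import cmath
import math

n_histories = 1000

# Hypothetical amplitudes: unit size, phases evenly spaced around the circle.
phases = [2 * math.pi * k / n_histories for k in range(n_histories)]
amplitudes = [cmath.exp(1j * phase) for phase in phases]

# Positive "relative probabilities" of the same size as the amplitudes.
weights = [abs(a) for a in amplitudes]

amplitude_sum = abs(sum(amplitudes))  # nearly zero: the phases cancel
weight_sum = sum(weights)             # about 1000: nothing cancels

print(amplitude_sum, weight_sum)
```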

For this reason, physicists often use a trick called “Wick rotation” that converts amplitudes to relative probabilities. To do this trick, we just replace time by imaginary time! In other words, wherever we see the variable “t” for time in any formula, we replace it by “it”. Magically, this often does the job: our amplitudes turn into relative probabilities! We then go ahead and calculate stuff. Then we take this stuff and go back and replace “it” everywhere by “t” to get our final answers.
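As a worked example, here is the standard textbook case of a single particle of mass m in a potential V, which is an illustration of my own rather than anything specific to quantum gravity. The sign convention t = -iτ is an assumption; it is the choice that makes the exponent decay rather than grow.

```latex
% Expected value as an average over paths x(t), weighted by amplitudes:
\[
  \langle O \rangle
  = \frac{\int O[x]\, e^{iS[x]/\hbar}\, \mathcal{D}x}
         {\int e^{iS[x]/\hbar}\, \mathcal{D}x},
  \qquad
  S[x] = \int \left[ \frac{m}{2}\left(\frac{dx}{dt}\right)^{2} - V(x) \right] dt .
\]
% Substituting t = -i\tau gives dt = -i\,d\tau and (dx/dt)^2 = -(dx/d\tau)^2, so
\[
  \frac{i}{\hbar} S[x]
  = -\frac{1}{\hbar} \int \left[ \frac{m}{2}\left(\frac{dx}{d\tau}\right)^{2} + V(x) \right] d\tau
  = -\frac{1}{\hbar} S_E[x] .
\]
% The complex amplitude e^{iS/\hbar} has become the positive weight e^{-S_E/\hbar}.
```

When V is bounded below, the weight e^{-S_E/ħ} is a positive real number that is largest for paths with small Euclidean action, which is what lets it play the role of a relative probability.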

While the deep inner meaning of this trick is mysterious, it can be justified in a wide variety of contexts using the “Osterwalder-Schrader theorem”. Here’s a pretty general version of this theorem, suitable for quantum gravity:

5) Abhay Ashtekar, Donald Marolf, Jose Mourao and Thomas Thiemann, Constructing Hamiltonian quantum theories from path integrals in a diffeomorphism invariant context, Class. Quant. Grav. 17 (2000) 4919-4940. Also available as quant-ph/9904094.

People use Wick rotation in all work on dynamical triangulations. Unfortunately, this is not a context where you can justify this trick by appealing to the Osterwalder-Schrader theorem. The problem is that there’s no good notion of a time coordinate “t” on your typical spacetime built by sticking together a bunch of 4-simplices!

The new work by Ambjorn, Jurkiewicz and Loll deals with this by restricting to spacetimes that do have a time coordinate. More precisely, they fix a 3-dimensional manifold and consider all possible triangulations of this manifold by regular tetrahedra. These are the allowed “slices” of spacetime - they represent different possible geometries of space at a given time. They then consider spacetimes having slices of this form joined together by 4-simplices in a few simple ways.

The slicing gives a preferred time parameter “t”. On the one hand this goes against our desire in general relativity to avoid a preferred time coordinate - but on the other hand, it allows Wick rotation. So, they can use the Metropolis algorithm to compute things to their hearts’ content and then replace “it” by “t” at the end.

When they do this, they get convincing evidence that the spacetimes which dominate the path integral look approximately like nice smooth 4-dimensional manifolds at large distances! Take a look at their graphs and pictures - a picture is worth a thousand words.

Naturally, what I’d like to do is use their work to develop some spin foam models with better physical behavior than the ones we have so far. If you look at my talk you can see some of the problems we’ve encountered:

6) John Baez, Spin foam models, talk at Non Perturbative Quantum Gravity: Loops and Spin Foams, May 4, 2004, transparencies available at http://math.ucr.edu/home/baez/spin_foam_models/

Now that Loll and her collaborators have gotten something that works, we can try to fiddle around and make it more elegant while making sure it still works. In particular, I’m hoping we can get well-behaved models that don’t introduce a preferred time coordinate as long as they rule out “topology change” - that is, slicings where the topology of space changes. After all, the Osterwalder-Schrader theorem doesn’t require a preferred time coordinate, just any time coordinate together with good behavior under change of time coordinate. For this we mainly need to rule out topology change. Moreover, Loll and her collaborators have argued in 2d toy models that topology change is one thing that makes models go bad: the path integral can get dominated by spacetimes where “baby universes” keep branching off the main one:

7) Jan Ambjorn, Jerzy Jurkiewicz and Renate Loll, Non-perturbative Lorentzian quantum gravity, causality and topology change, Nucl. Phys. B536 (1998) 407-434. Also available as hep-th/9805108.

Renate Loll and W. Westra, Space-time foam in 2d and the sum over topologies, Acta Phys. Polon. B34 (2003) 4997-5008. Also available as hep-th/0309012.

By the way, it’s also good to read about their 3d model:

8) Jan Ambjorn, Jerzy Jurkiewicz and Renate Loll, Non-perturbative 3d Lorentzian quantum gravity, Phys. Rev. D64 (2001) 044011. Also available as hep-th/0011276.

and for a general review, try this:

9) Renate Loll, A discrete history of the Lorentzian path integral, Lecture Notes in Physics 631, Springer, Berlin, 2003, pp. 137-171. Also available as hep-th/0212340.

Re: Causality in Discrete Models of Spacetime
