This Week’s Finds in Mathematical Physics (Week 282)

Posted by John Baez

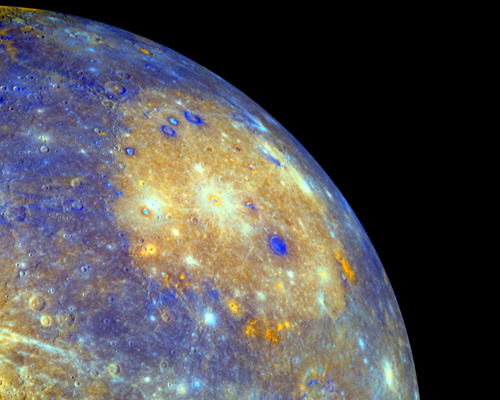

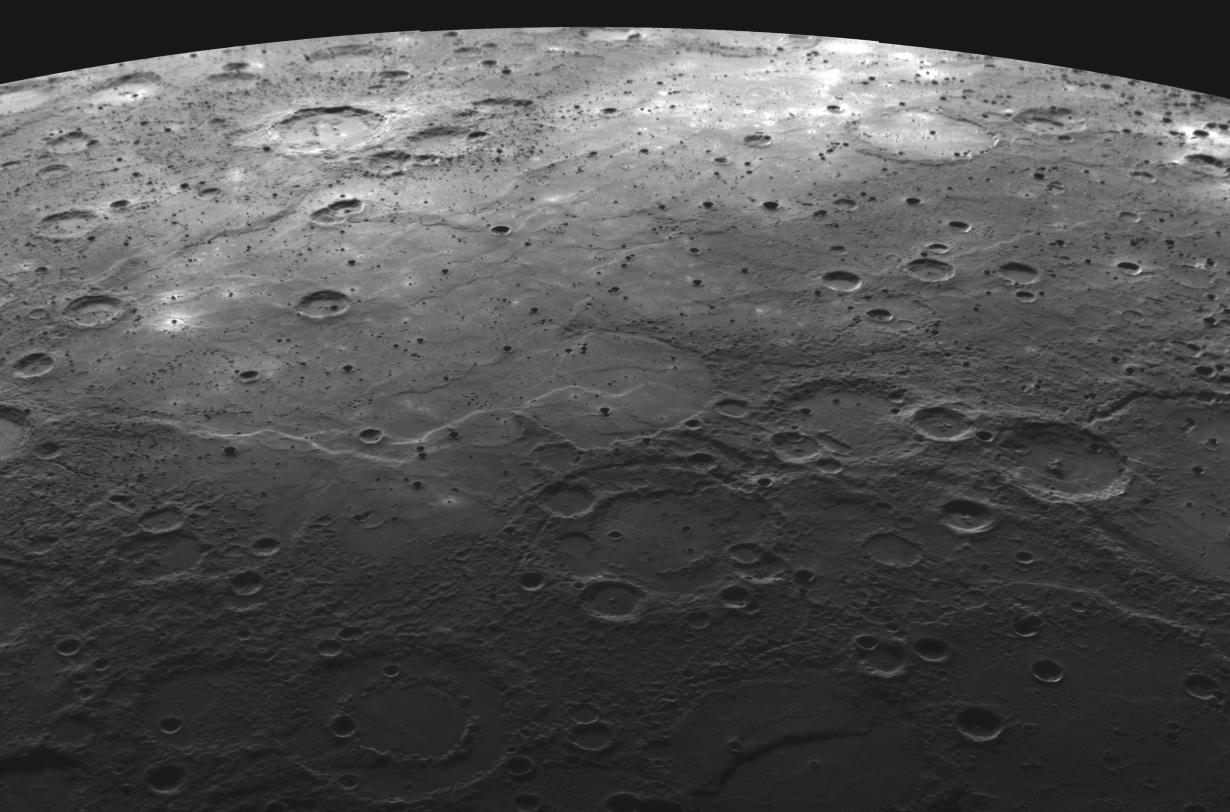

In week282 of This Week’s Finds, visit Mercury:

Learn how this planet’s magnetic field interacts with the solar wind to produce flux transfer events and plasmoids. Then read about the web of connections between associative, commutative, Lie and Poisson algebras, and how this relates to quantization. In the process, you’ll meet linear operads, their generating functions, and Stirling numbers of the first kind!
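Here is a small Python sketch of how those last three items fit together. It is not taken from the week itself. It assumes the standard facts that dim Lie(m) = (m-1)!, dim Assoc(n) = n!, and that Poisson(n) splits as a sum over partitions of {1, ..., n} into blocks, with one Lie operation per block. Under those assumptions, summing over the partitions with k blocks should give the unsigned Stirling number of the first kind c(n, k), which is the coefficient of x^k in the generating polynomial x(x+1)...(x+n-1). Summing over k then gives n!. The helper names are hypothetical.

```python
from math import factorial, prod


def set_partitions(elements):
    """Yield every partition of the list `elements` into nonempty blocks."""
    if not elements:
        yield []
        return
    first, rest = elements[0], elements[1:]
    for partition in set_partitions(rest):
        # Either `first` starts a new block of its own...
        yield [[first]] + partition
        # ...or it joins one of the existing blocks.
        for i in range(len(partition)):
            yield partition[:i] + [[first] + partition[i]] + partition[i + 1:]


def poisson_dims_by_blocks(n):
    """dims[k] = sum over partitions of {0..n-1} into k blocks of prod (|B|-1)!.

    Assumption: this is the dimension of the part of Poisson(n) made of
    commutative products of k Lie words, using dim Lie(m) = (m-1)!.
    """
    dims = [0] * (n + 1)
    for partition in set_partitions(list(range(n))):
        dims[len(partition)] += prod(factorial(len(block) - 1) for block in partition)
    return dims


def stirling_first_row(n):
    """Coefficients of x(x+1)...(x+n-1); the x^k coefficient is c(n, k)."""
    coeffs = [1]  # the constant polynomial 1
    for m in range(n):
        # Multiply the current polynomial p(x) by (x + m).
        shifted = [0] + coeffs                  # x * p(x)
        scaled = [m * c for c in coeffs] + [0]  # m * p(x)
        coeffs = [a + b for a, b in zip(shifted, scaled)]
    return coeffs


for n in range(1, 7):
    dims = poisson_dims_by_blocks(n)
    assert dims == stirling_first_row(n)
    assert sum(dims) == factorial(n)  # dim Poisson(n) = dim Assoc(n) = n!
    print(n, dims)
```

For n = 4 this prints `4 [0, 6, 11, 6, 1]`, and 6 + 11 + 6 + 1 = 24 = 4!.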

Posted at October 29, 2009 9:34 PM UTC

Re: This Week’s Finds in Mathematical Physics (Week 282)

Also, you might enjoy answering these questions, most of which I haven’t tried.

Here’s another: if gr(Assoc) = Poisson, what is the meaning of the Rees and blowup algebras associated to this filtration?

(Given a multiplicative filtration R = R_0 ⊇ R_1 ⊇ …, e.g. by powers R_n = I^n of an ideal I, you can look at the subring of R[t] that has t^n R_n in the nth degree piece; that’s the blowup algebra. If you also include t^{-n} R in degree -n for each n > 0, you get a subring of R[t, t^{-1}]; that’s the Rees algebra. If you mod out the Rees algebra by (t^{-1} - c), you get R for any nonzero c, and gr R for c = 0.)
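As a minimal worked example of these definitions (my own illustration, not part of the comment), take R = k[x] for a field k, filtered by powers of the ideal I = (x). Note that it is t^{-1}, not t, that lies in the Rees algebra. Here the Rees algebra turns out to be a polynomial ring in u = xt and s = t^{-1}, and setting s = c recovers R for c ≠ 0 and gr R for c = 0:

```latex
\begin{aligned}
R &= k[x], \qquad R_n = I^n = x^n k[x], \\
\text{blowup: } \bigoplus_{n \ge 0} t^n R_n &= k[x,\, xt], \\
\text{Rees: } \bigoplus_{n \ge 0} t^n R_n \oplus \bigoplus_{n > 0} t^{-n} R
  &= k[xt,\, t^{-1}] = k[u, s], \qquad u = xt,\ s = t^{-1},\ x = us, \\
k[u, s]/(s - c) &\cong k[u] \cong R \qquad (c \neq 0,\ x \mapsto c\,u), \\
k[u, s]/(s) &\cong k[u] \cong \bigoplus_{n \ge 0} x^n k[x] / x^{n+1} k[x] = \operatorname{gr} R .
\end{aligned}
```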